The Best Google SEO Penalties Articles We’ve Written

IRS.com, DMV.org, and Medicare.com Lose 80% of Search Visibility

Note* – These are NOT government sites

https://www.sistrix.com/blog/irs-com-dmv-org-suffer-huge-visibility-losses-suspected-google-manual-penalty/

Holy sh*t this is brutal. I wouldn’t wish this on my worst enemies (unless they were my SERP competitor). In one fell swoop, Google wiped millions of monthly visits from three SEO giants.

Look at this carnage:

IRS.com:

DMV.org:

Medicare.com:

Why did this happen? Who knows, but it was probably a Google manual penalty against sites that mislead visitors. None of these sites about government programs are official government sites, and Google probably doesn’t want to have a hand in misleading visitors, so maybe it’s a preemptive slap before they’re slapped by regulators. That said, some of the sites are pretty up-front about not being official, but it’s doubtful everyone pays attention to that. Here’s the Sistrix.com take:

Is there a government action behind this? Will the two domains be able to recover? Do the two companies even know this has happened?

Yes, there could be a technical explanation that we’ve missed so we advise caution. It is possible that the content inside the pages has been made invisible to Googlebots. But for three companies, all running ‘ghost-like’ US government-related websites, to see the same pattern seems like too much of a co-incidence to us.

Definitely a story to keep an eye on.

Getting Your Penalties Straight

https://www.searchenginejournal.com/seo-guide-panda-penguin-hummingbird/169167/

SEO penalties suck, but in 2016, they are fairly avoidable. Still, in your eagerness to rank a site, it’s easy to misstep and do something stupid that could bury your site.

Oops.

I’m a fan of reviewing common knowledge to make sure all the important things stay top-of-mind, and this is a great post for it.

Technical SEO also does not play any role in Panda. Panda looks just at the content, not things like whether you are using H1 tags or how quickly your page loads for users.

If you’re an SEO beginner, or a business looking for more info on how to create a site that plays nicely with Google, this post is a nice, safe starting point.

Note* Although Matthew says that technical SEO does not matter, we like to recommend everyone keep user experience in mind first. If your user experience metrics suck, penalties are more likely to occur. If your user experience is phenomenal, your site tends to get more of a get-out-of-jail-free card.

Why You Cannot Fix Your Penguin Site

http://www.seo-theory.com/2016/08/04/google-penguin-why-you-cannot-fix-your-website/

It’s been a long time since Google updated their Penguin algorithm.

Do you have a site sitting in Penguin Purgatory (Penguitory?) that you keep disavowing links for and adding fresh, amazing content to?

This article makes the case that it’s time to let that site go, and start fresh somewhere else.

You are not nearly as tired of hearing this from me as I am of telling you. Stop waiting for Google to “fix” Penguin. You don’t even know if you have tracked down all the links that Penguin declared to be toxic. Many of you have clearly been disavowing or removing very good links that were helping your sites.

When the Penguin rolls out again (assuming it does as Google believes it shall) some people will be very happy but I am convinced on the basis of past experience that way too many people are going to be immensely disappointed with the results.

It doesn’t make sense for Google to be transparent on what links are triggering the algorithm, and you might put in hours and hours and hours trying to reverse engineer the penalty only to have the update happen and not have done a good enough job to lift the penalty…

Outbound Link Penalty: Don’t NoFollow All Links!

http://www.thesempost.com/dont-nofollow-all-links-for-outbound-link-manual-action-recovery/

If you got penalized with the recent outbound-link manual action you might be thinking:

I’mma go and NoFollow every link on my site. Google loves NoFollowed links…

Hang on a sec.

According to SEM Post, John Mueller (J Mu?) wrote this:

There’s absolutely no need to nofollow every link on your site!

When/If you get penalized by Google, don’t react like you saw a spider on your pillow.

Instead, keep an eye on the chatter, make sure to understand the reason for the penalty as best you can, and THEN take action.

Don’t start editing your website hoping you do something that pleases Google.

Just a friendly PSA…

Google Penalizing for Unnatural Outbound Links

http://searchengineland.com/google-penalizes-sites-unnatural-outbound-linking-247001

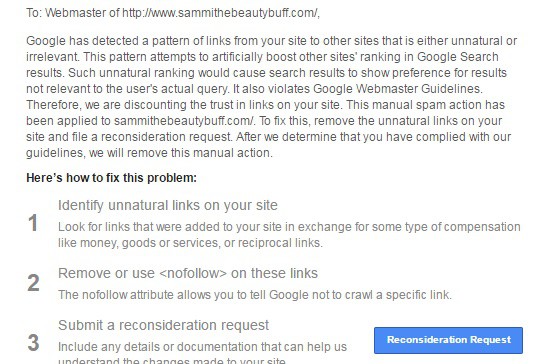

Over the weekend Google sent out manual action penalties to some webmasters for “unnatural outbound links.”

Here’s one such message:

The interesting thing is that the site itself isn’t being penalized by falling in the rankings. Check out the message to see:

Google has detected a pattern of links from your site to other sites that is either unnatural or irrelevant. This pattern attempts to artificially boost other sites’ ranking in Google Search results. Such unnatural ranking would cause search results to show preferences for results not relevant to the user’s actual query. It also violates Google Webmaster Guidelines. Therefore, we are discounting the trust in links on your site. This manual spam action has been applied to domain.com. To fix this, remove the unnatural links on your site and file a reconsideration request. After we determine that you have complied with our guidelines, we will remove this manual action.

If you find yourself hit with this action, here’s the official “next steps” from Google.
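Before you remove or nofollow anything, it helps to see which outbound links you actually have. Here’s a rough Python sketch (standard library only) that lists the external links on a page and whether each one is already nofollowed. The class name, function name, and example domains are all made up for illustration, and it only builds a list for you to review by hand. It makes no attempt to guess which links Google considers “unnatural.”

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit


class OutboundLinkAuditor(HTMLParser):
    """Collects external <a href> links and notes whether each is nofollowed."""

    def __init__(self, own_domain):
        super().__init__()
        self.own_domain = own_domain.lower()
        self.links = []  # list of (href, is_nofollow) tuples

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        href = attr.get("href") or ""
        host = (urlsplit(href).hostname or "").lower()
        # Skip relative links and links to our own domain or its subdomains.
        if not host or host == self.own_domain or host.endswith("." + self.own_domain):
            return
        rel_values = (attr.get("rel") or "").lower().split()
        self.links.append((href, "nofollow" in rel_values))


def audit_outbound_links(html, own_domain):
    """Return [(href, is_nofollow), ...] for every external link in the HTML."""
    auditor = OutboundLinkAuditor(own_domain)
    auditor.feed(html)
    return auditor.links


if __name__ == "__main__":
    # Hypothetical page snippet, for illustration only.
    page = (
        '<a href="/about">About</a>'
        '<a href="https://partner.example/deal" rel="nofollow">Deal</a>'
        '<a href="https://other.example/">Other</a>'
    )
    for href, nofollowed in audit_outbound_links(page, "mysite.example"):
        print(href, "nofollow" if nofollowed else "followed")
```

Run it over your templates and your most-linked posts first, then decide link by link what to do.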

Structured Data (Schema) Penalties

So this is a new thing. Having “spammy” structured markup can bring you a penalty, like how keyword stuffing used to work really well, until your ass got wiped off the face of the web.

Markup on some pages on this site appears to use techniques such as marking up content that is invisible to users, marking up irrelevant or misleading content, and/or other manipulative behavior that violates Google’s Rich Snippet Quality guidelines.

The Search Engine Land article goes into how to prevent this and some thoughts on recovery. Definitely worth a read.

How to Easily Recover From a Google Penalty: Be Backed By Google Ventures

So here’s the timeline:

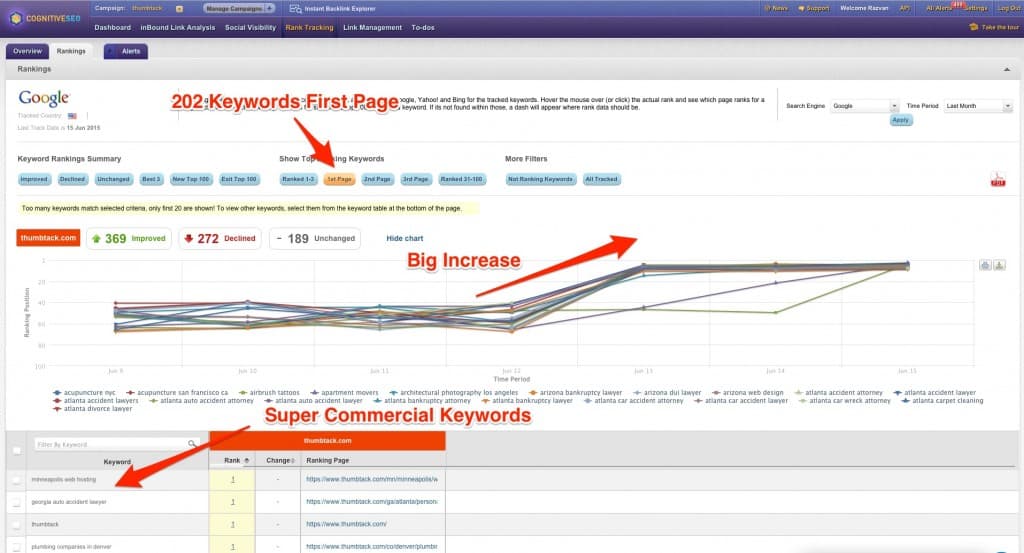

On June 6th, 2015, the site GoodOnMaps published a post called Is Google Making Money on Organic Search? This post essentially pointed out how Thumbtack, a Google Ventures-backed company, had been sending emails offering incentives to customers in return for some juicy exact-match anchor text links.

On June 8th, 2015, Search Engine Land ran a story saying Thumbtack’s co-founder, Jonathan Swanson, went on the record saying they were indeed penalized, but made sure to point out that they never “bought” a link.

On June 15th, 2015, Search Engine Land ran another story saying that the manual penalty was removed “late last week” and that Thumbtack’s traffic has returned to pre-manual-penalty levels. CognitiveSEO shows us the hard data:

The plus side of getting a high-profile Google penalty is all the brand mentions and links from authoritative news sites. Well played, Thumbtack (like that!).

Google Hands Out Manual Thin-content Penalties Monday Morning

https://www.seroundtable.com/google-manual-actions-thin-content-20279.html

The opposite of a golden ticket, but this time the “Thin Content Penalties” are not about affiliate sites. Many people reported getting this message in their Webmaster Tools over the weekend as Google cracked down on a possible content network (though many people wrote in to say that they had not, in fact, been part of a network).

It seems Google went after a content network and located many of the sites participating in this network and then slapping them with thin content manual actions. I do not have confirmation from Google but I received a couple notes about it over the weekend from anonymous sources and there are many threads in the various forums with people complaining about these thin content actions.

Searchmetrics chimed in via the comments with some really interesting preliminary data:

Here are four examples with screenshots of their SEO Visibility (a metric that indicates ranking performance and eventually traffic):

– lef.org (-89%)

– arthritistoday.org (-87%)

– myrecipes.com (-21%)

– moviefone.com (-25%)

Causes for the drop I found are:

– Duplicate content by indexed paginations (no rel=prev / next or meta noindex used)

– Thin content by indexed hub pages that just tease articles but own no comprehensive copy on a large scale

– Thin content by short news articles

– Thin content by pages with only images and no copy

And some “search visibility” graphs to back it up.

Ouch…

Manual Penalty Removal: An Ahrefs Case Study

https://blog.ahrefs.com/manual-penalty-removal-ahrefs-case-study/

Ahrefs successfully performed a manual penalty removal using their site explorer tool, and documented the process as a tutorial. They worked with a site that already had a manual penalty, and found spam links using their search tool while analyzing the site’s backlink profile. They sorted sites by domain authority and looked at websites with a score of 40 or less. Along with that, they sorted the anchor-text data and noted sites with a high percentage of the same text. The process was subjective, and sites they deemed as spam were marked down on a list. The list was then used to perform outreach, requesting the links in question be removed. Along with reaching out to site owners, they used Google’s disavow tool and submitted a reconsideration request. The whole process took 3 months, and in the end they successfully removed the manual penalty.

From the article: “In many cases, we had to send multiple outreach messages (up to 6 per/domain) in order to get a website owner’s attention. In other cases, there were no responses at all. Sometimes site owners requested a bounty for removing links. This is simply a request for payment and in this case study, no bounty ever exceeded 20 dollars. For our case study, the outreach lasted for about 2 months. We sent 6 emails and/or contact form messages per violating domain.”
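If you want to try the same kind of first-pass filtering on your own backlink export, here’s a minimal Python sketch. The 40-or-less score cutoff comes from the case study. Everything else is an assumption for illustration: the sample data, the function name, and the 50% anchor-text share threshold. The case study calls the process subjective, so treat the output as a list to review by hand, not a disavow file.

```python
from collections import Counter

# Hypothetical backlink records: (referring domain, authority score, anchor text).
BACKLINKS = [
    ("cheap-links.example", 12, "best blue widgets"),
    ("spam-directory.example", 25, "best blue widgets"),
    ("link-farm.example", 8, "best blue widgets"),
    ("respected-news.example", 78, "Acme Widgets"),
    ("hobby-blog.example", 35, "this post"),
]


def flag_for_review(backlinks, max_score=40, anchor_share=0.5):
    """Return referring domains worth a manual look.

    A domain is flagged when its score is at or below max_score, or when its
    anchor text makes up at least anchor_share of all anchors in the profile.
    The anchor_share default is an assumed value, not one from the case study.
    """
    anchor_counts = Counter(anchor for _, _, anchor in backlinks)
    total = len(backlinks)
    flagged = []
    for domain, score, anchor in backlinks:
        low_score = score <= max_score
        repeated_anchor = anchor_counts[anchor] / total >= anchor_share
        if low_score or repeated_anchor:
            flagged.append(domain)
    return flagged


if __name__ == "__main__":
    for domain in flag_for_review(BACKLINKS):
        print("review:", domain)
```

A human still has to open each flagged domain and decide whether it’s really spam before any outreach or disavow happens.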

How To Recover From a Google Panda Penalty

Let’s face it. Pandas are evil. I know they look all cute n’ cuddly, but that’s their secret weapon. They wait for you to let your guard down, then they ferociously strike when you least expect it, bringing pain and agony to us innocent bystanders. Still not buying it? Take this handy dandy Evil Panda Quiz to edumacte urself.

As many of you might already know, the latest Panda algorithm update, version 4.0, was just released.

So, if you are a victim of a panda attack, worry not. Here are the best steps for getting yourself out of Big-G’s bad graces, and back to making coin slangin’ your weight loss rebills and Clickbank ebooks in no time (I won’t judge).

I’m going to briefly cover 3 things here:

- What Panda is and what it looks for.

- The top mistakes we commonly see.

- Three unique tactics for ditching the penalty.

1) What The Heck Is It?

For the sake of brevity, Panda is more or less an on-site filter designed to punish so called “low quality” websites. I say “filter” instead of the more commonly used “penalty” because it tends to act more in that manner, although if you asked some recently spanked webmaster they might be willing to vigorously argue that point.

The way it works is that Panda has this “shit list” of stuff you’re not supposed to do: for example, having poor content, too many ads, or a bad click-through rate. You can usually get away with a little here or there, but once your site has “checked off enough boxes” from the no-no list, your entire domain gets filtered in a negative way. This is why websites hit by Panda tend to lose rankings all across the site, instead of just on a few individual pages.

Here Are The Basics Of What Panda Looks For:

- Duplicate content. How many times do I have to preach this? Just use unique content already.

- Thin content throughout the site. Too many pages without substance.

- Spun content.

- Poor click through rate. People don’t click on your result in Google.

- Poor bounce rate for certain niches (the “POGO effect”). Your visitors immediately leave your site and go to the next one.

- Too many ads above the fold. Do visitors see nothing but ads at first when visiting your site?

- Unrelated ads.

- OVER-optimization of keywords.

- Same content throughout the site.

- Overly slow page speed.

- Cloaking.

- Messy internal linking, and a mass overuse of internal link “money keyword” tags.

- Poor coding on a mass scale

- Lack of social signals. I say this is niche dependent if it’s true. No one is sharing their herpes treatment on Facebook.

2) Why A Panda Is Probably Picking On You:

So as you can see, there is a lot of stuff to take into consideration. If you’re one of the top sites that got creamed, restoring rankings can be a very large and painful process, since you’re dealing with massive websites, user generated content, etc.

Luckily though, if you’re reading this you’re most likely not a ginormous site with a million users. You’re probably a small business with a perfectly manageable site. In my team’s experience, we usually see the same correctable mistakes over and over from the average small business owner. Here are the top 4.

You’ve outsourced your article writing, and your writer is scamming you.

- A lot of shady writers write a unique first paragraph, and then just copy and paste the majority of the rest from a few different sources.

- Check your content using CopyScape. I personally like to copy a couple of complete sentences of my content and paste them in the Google search box inside of “quotation marks”. This searches for the exact sentence, so duplicates show up.

- Also, some spammers might be scraping and using your content elsewhere, maybe even completely duplicating your site at the code level. This devalues your website unless you’re already a big authority. If you’re on WordPress, you can use the blog content protector plugin to reduce this (hats off to Cody for the plugin tip).

- If this is the case, your site is full of duplicate content. Fix this asap.
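The quotation-mark trick above is easy to semi-automate. This little Python sketch picks a couple of full sentences from an article and builds exact-match Google search URLs that you can open in your browser. The function name and the “at least 8 words” rule for choosing sentences are my own assumptions, and it only builds the URLs. It doesn’t fetch or scrape any results.

```python
import re
from urllib.parse import quote_plus


def exact_match_search_urls(text, max_sentences=2, min_words=8):
    """Build quoted Google search URLs for the first few long sentences.

    Sentences shorter than min_words are skipped because short phrases
    match too many unrelated pages to be a useful duplicate check.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    urls = []
    for sentence in sentences:
        if len(sentence.split()) >= min_words:
            # Wrapping the sentence in quotes asks for an exact-phrase match.
            urls.append("https://www.google.com/search?q=" + quote_plus(f'"{sentence}"'))
        if len(urls) == max_sentences:
            break
    return urls


if __name__ == "__main__":
    article = (
        "Blue widgets rock. Our blue widgets are hand assembled in small "
        "batches by people who care about quality."
    )
    for url in exact_match_search_urls(article):
        print(url)
```

If a sentence your writer supposedly wrote from scratch turns up word-for-word on other sites, you’ve got your answer.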

Your content is hard to find on the page.

- Too many ads

- Quote forms covering the top half of the page

- etc.

- Make sure your content is prioritized.

You have a lot of thin content pages

- I would guess this is the biggest problem overall. This can be innocent, as you just have pages that aren’t really for the bulk of your traffic, but more for maintenance. Or perhaps you have some thin user generated pages. A million reasons this can happen.

- Some statistic that I can’t verify said if as little as 30% of your site is thin it can bring the bad bear. You do the math.

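- Easy fix: just de-index them with a noindex tag. They don’t have to be deleted. One catch: if you also block robots from crawling the page, Google can’t see the noindex tag, so let the page drop out of the index before you block crawling.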

- On WordPress sites, the Yoast WordPress SEO plugin makes it super easy to not index a page if you’re not technical. Just click “Advanced Options”, and set the drop-down to “no-index”.
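To get a feel for how much of your site is thin, you can run the math on a list of word counts per page. Below is a rough Python sketch. The 300-word cutoff for “thin” is purely my assumption for illustration, the 30% figure is the unverified statistic mentioned above, and the URLs are made up.

```python
def thin_content_share(word_counts, thin_below=300):
    """Return (share_of_thin_pages, sorted_thin_urls).

    word_counts maps each indexable URL to its word count. thin_below is an
    assumed cutoff; pick whatever makes sense for your niche.
    """
    if not word_counts:
        return 0.0, []
    thin_urls = sorted(url for url, words in word_counts.items() if words < thin_below)
    return len(thin_urls) / len(word_counts), thin_urls


if __name__ == "__main__":
    # Hypothetical crawl export: URL path -> word count.
    pages = {
        "/": 850,
        "/services": 1200,
        "/blog/panda-recovery": 1900,
        "/tag/seo": 40,
        "/tag/links": 35,
        "/thank-you": 20,
        "/about": 600,
        "/contact": 310,
        "/blog/penguin": 1500,
        "/blog/manual-actions": 1100,
    }
    share, thin = thin_content_share(pages)
    print(f"{share:.0%} of pages are thin")
    if share >= 0.30:  # the unverified 30% figure from the list above
        print("Candidates for noindex:", thin)
```

The thin URLs it prints are your candidates for the noindex treatment.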

Your website is from 1990.

- Small businesses, especially ones that exist in the physical world, tend to have severely outdated sites.

- These sites can have structural problems, and are hard to navigate

- PLUS they don’t have any trust. Users don’t stay.

- Update already. I use WordPress for 95% of my businesses & recommend it for most others.

Of course all of the other Panda factors play here, but these are the main ones that we see over and over again at Smash Digital. Get to work peeples.

3) How To Finally Kill That Panda:

Ok, enough with the history lesson. Now we want to recover your site’s rankings. I’m going to present some strategies that can work and have worked. Remember though, NOTHING IN SEO IS EVER GUARANTEED. The tips below are only POSSIBLE solutions, as we are trying to game the system here to speed up your recovery time and get your traffic back. Sometimes it works, but unfortunately sometimes it doesn’t.

Last but not least, I’m going to assume that you already corrected the problems that caused the Panda Attack. It’s useless otherwise.

YOU CANNOT SKIP THIS CORRECTION STEP (sorry to yell, but this point is important). On to your options!

The “Standard” Option

Wait For A Refresh

- This is boring and not fun to write about, but this is the standard advice. Correct your problems, wait for the next Panda refresh, and hope for the best. In theory it should always work, but in practice there are times it doesn’t, and can take FOREVERRRRRrrr until it refreshes sometimes. Some sites will be good to go within a month after correcting the problems, some might never bounce back for whatever reason.

The More Advanced Options For Those Who Can’t Wait

301-Redirect To A New Domain

- This can be the quickest route to recovered traffic when it works, and has the best chances of success if speed is necessary. If your Panda-fried domain is not overly important to you and is not branded, this is what I would try first.

- Copy the site to a new domain. Leave it deindexed and block all bots.

- Correct the problems that caused the penalty.

- 301 the original site to the new one on a page-by-page basis.

- Make the new site indexable and crawlable.

- Tell Webmaster Tools. HERE is the Google guide.

- Pray to the Google Gods.
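The page-by-page 301 step is where people get lazy and point everything at the new homepage. Here’s a small Python sketch that builds an old-URL to new-URL map while keeping each page’s path and query string. The domains and function name are hypothetical, and it assumes the new site keeps the same URL structure and runs on https. How you load the map into your server or redirect plugin is up to you.

```python
from urllib.parse import urlsplit, urlunsplit


def build_redirect_map(old_urls, new_domain):
    """Map every old URL to the same path (and query) on new_domain."""
    mapping = {}
    for old_url in old_urls:
        parts = urlsplit(old_url)
        # An empty path means the homepage, so send it to "/" on the new domain.
        new_url = urlunsplit(("https", new_domain, parts.path or "/", parts.query, ""))
        mapping[old_url] = new_url
    return mapping


if __name__ == "__main__":
    # Hypothetical URLs pulled from the old site's sitemap.
    old_pages = [
        "http://panda-fried.example",
        "http://panda-fried.example/services/",
        "http://panda-fried.example/blog/post?id=3",
    ]
    for old, new in build_redirect_map(old_pages, "fresh-start.example").items():
        print(f"301: {old} -> {new}")
```

Spot-check a handful of the mapped URLs on the new site before you flip the redirects on.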

Deindex Your Domain, Then Bring It Back

- If your brand name is important to you, this might be worth a shot.

- Completely deindex your domain. Block the bots and tell Webmaster Tools.

- Correct all the problems you found.

- Wait until all of your site has left Google’s cache.

- Re-index the site and hope for the best.
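For either the deindex or the re-index step, it’s worth double-checking what your pages are actually telling robots. This Python sketch (standard library only) parses a page’s HTML and reports whether a robots meta tag says noindex. The class and function names are made up for illustration, and it only looks at meta tags. It won’t see robots.txt rules or HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> / <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() in ("robots", "googlebot"):
            content = attr.get("content") or ""
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def is_noindexed(html):
    """True if the page's robots meta tag contains noindex (or none)."""
    parser = RobotsMetaParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow".
    return bool({"noindex", "none"} & parser.directives)


if __name__ == "__main__":
    blocked = '<head><meta name="robots" content="noindex, nofollow"></head>'
    open_page = '<head><meta name="robots" content="index, follow"></head>'
    print(is_noindexed(blocked), is_noindexed(open_page))
```

Feed it the HTML of a few key pages: you want True while the site is hidden and False once you’re ready to bring it back.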

The theory with the last two options is that you’re more or less bringing “a new domain” into the picture as far as Google is concerned. To Google, this new site doesn’t have any of the bad qualities it doesn’t like, yet it still has all of the link juice it’s looking for, since your backlinks are already there.

* Bonus tip: I like to send fake social signals (likes/tweets/+’s) to the newly revived/301’d domains once they are ready to go. No downside, high potential upside type of thing.

Comment Below!

I would love to hear what has worked for you, success stories, writer appreciation, marriage proposals, and more below. I write back!

Also, don’t forget to subscribe to my list! I’ll send out the real goodies there. Not everything is for the public.