The Best Technical SEO Articles: Our Collection

Another Broad Core Algorithm Update

https://www.seroundtable.com/march-12-13-google-search-ranking-algorithm-update-27249.html

Hold on to your asses, the SERPs are getting a little bit wild again.

Recently, Google confirmed the roll-out of a broad core algorithm update (which is what the “Medic” update really was) on March 12th. Whether your traffic came crashing down or shot up into space, these broad core algo updates aren’t subtle.

The solution to ranking well again, if your traffic fell? Same as before:

We understand those who do less well after a core update change may still feel they need to do something. We suggest focusing on ensuring you’re offering the best content you can. That’s what our algorithms seek to reward….

— Google SearchLiaison (@searchliaison) October 11, 2018

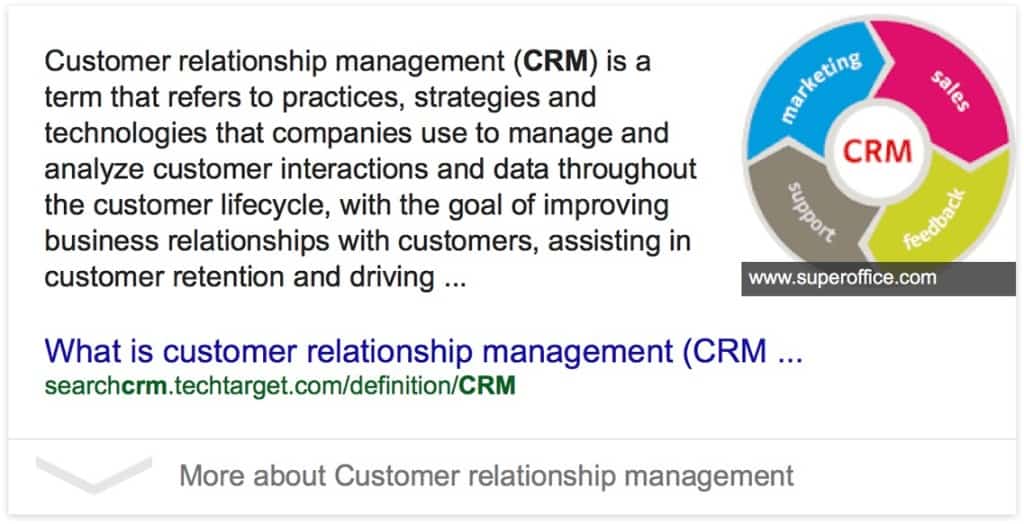

A Glimpse At The Bleak, Post-Apocalyptic Future of SEO

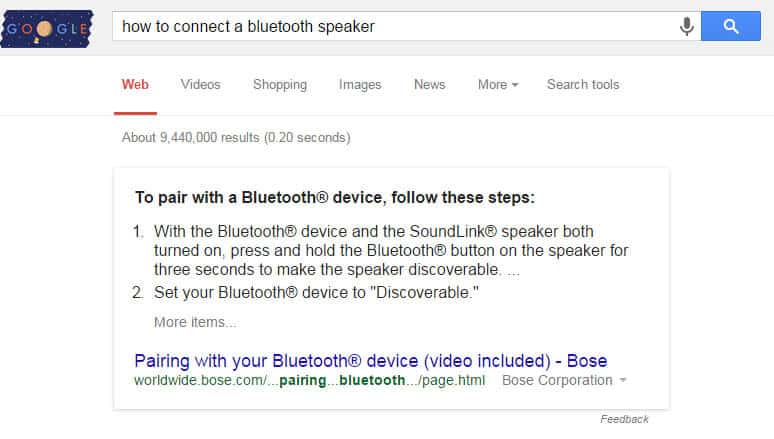

This is an incredible search result from Google:

• Answers a fairly complex question

• Takes the copy of many 3rd party publisher to create its own independent web page

• Zero visible on-page links to the publishers who provided the data

This is the future of Google Search pic.twitter.com/txNU2Z7HB5

— Cyrus (@CyrusShepard) February 23, 2019

Google Says: Disavowing Bad Links Could Increase Trust

https://www.seroundtable.com/google-trust-algorithmic-links-27014.html

This seems like a pretty important point that most people paying attention to their backlinks should tuck away for the future.

Google’s John Mueller said in a webmaster hangout on Tuesday at the 16:44 mark that in some cases, disavowing or cleaning up bad links to your site may help Google’s algorithm trust other links to your site.

So if you’ve been on the fence about whether or not to disavow those crappy links a disgruntled competitor sent your way, maybe this pushes you over the edge. Here’s the video where you can watch the conversation:

The August 1st Core Algorithm Update

(“Medic” SEO Update)

On August 1st, Google rolled out a core algorithm update, which means they made a change to how the algorithm scores and values the many factors that determine how well a site does or does not rank for a given keyword.

Google is constantly pushing out small updates to its algorithm to try and improve the results it serves to searchers, but this was one of the biggest many SEO experts had ever seen.

Even if you don’t follow SEO-related news closely, chances are you noticed a change to your site’s traffic sometime between August 1st and August 8th. Whether it increased or declined, the August 1st update had a pretty big impact on a lot of sites.

According to data gathered by SEO publication sites (and a ton of chatter and first-hand accounts on sites where SEOs hang out), this update seemed to target sites related to the health industry and related keywords. However, health was just one of many industries that were affected.

Here’s a graph from SERoundtable that pulls a bunch of info together to show which industries were most affected:

Expert Speculation and What Google Says

This core algorithm update, dubbed the Medic Update by an industry news site because it largely targeted health-related sites, took a solid week to fully roll out.

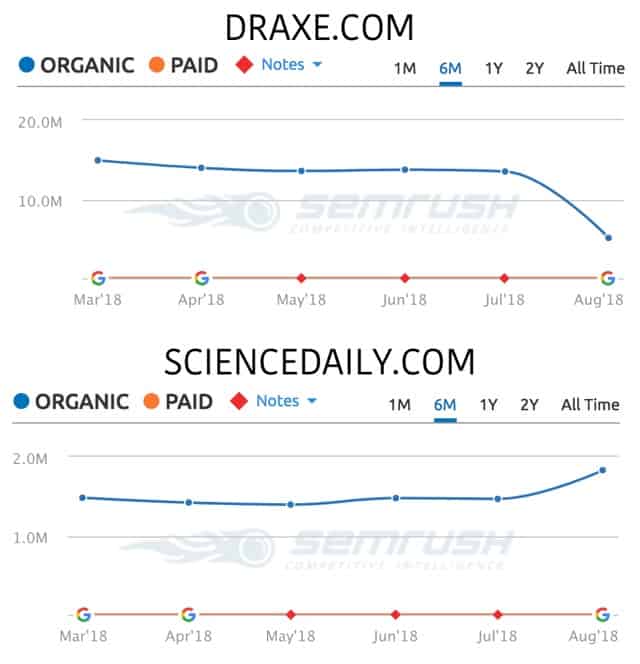

Sites that pushed “alternate health” advice saw the biggest initial drop. Examples include DrAxe.com and Prevention.com.

This led several experts to push the idea that Google had tweaked their algorithm to reward sites that are true authorities relating to the healthcare industry. In Google’s Quality Rater Guidelines (QRG), a document that spells out what does (and does not) constitute a high-quality site, Google spells out the importance of E-A-T:

Expertise. Authoritativeness. Trustworthiness.

The initial takes on the update pointed to sites like DrAxe.com losing organic rankings and traffic, while sites with more “traditional” authority in the medical industry, like (the health section of) ScienceDaily.com, gained a significant amount of organic traffic through better rankings:

Marie Haynes pointed to Google’s Quality Rater Guidelines, specifically the Trust part of the E-A-T acronym, as the reason why sites lost ground in their rankings:

If you run a [health related] site, the following are all going to be important factors in how you rank:

- Is your content written by people who are truly known as authorities in their field?

- Do your business and your writers have a good reputation?

- Are you selling products that are potentially either scams, not helpful, or even harmful to people?

If you are lacking business or author reputation or have products that don’t inspire trust, then re-establishing trust and ranking well again may be difficult.

Many were quick to jump on the E-A-T bandwagon to explain the drastically changed search results. However, focusing only on the matter of Trust and Expertise is to ignore many important factors that may impact a site. As Glenn Gabe wrote re: the update:

I highly recommend reading the QRG to see what Google deems high versus low quality, to understand how Google treats [health-related] sites, to understand the importance of E-A-T, to understand the impact of aggressive, disruptive, and deceptive ads, and much more.

But it’s not the only thing you should do. …don’t ignore technical SEO, thin content, performance problems, and other things like that. Think about the site holistically and root out all potential problems.

This is evergreen good advice when it comes to SEO.

Here’s what Google team member Danny Sullivan said about the update:

Want to do better with a broad change? Have great content. Yeah, the same boring answer. But if you want a better idea of what we consider great content, read our raters guidelines. That’s like almost 200 pages of things to consider: https://t.co/pO3AHxFVrV

— Danny Sullivan (@dannysullivan) August 1, 2018

What the update was targeting (and what to do about it)

Now that you understand the scope and a little bit about what this update targeted (trust, yes, but many other issues), you’re probably wondering what you can do about this update if you lost some ground.

The most important thing to remember when reading the next section is: this was a broad core algorithm update. The key is “broad.” It wasn’t just one thing. It’s not just targeting the medical/health niche, although they were hit particularly hard. It’s not just about query intent or site speed or content. It’s about ALL of them.

The second most important thing to remember when reading these updates from various SEOs: how does their advice relate to their product? Bias is a hell of a lens to view the world through, so just be aware of what’s on offer.

Is a particular ‘expert’ or agency really hammering, say, individual author authority as the biggest thing this update targeted? Do they happen to offer reputation management? If so, take their advice into consideration with the bias in mind.

At Smash Digital, we build links. And we’re really good at it. So just be aware of the bias that we think building powerful links is one of the most important things you can do for your business.

Also, we’re totally right about this, but keep that in mind when you listen to our take–and anyone else’s take–on the update.

With those points covered, let’s dig into what the August 1st update might have targeted and, if possible, what you can do about it.

1. For some queries, in some niches, the intent behind the query changed

For some queries–specifically related to medical niches–Google seemed to do the SERP equivalent of reaching over the table and mixing up your plate of food right as you were about to Instagram it.

I’ve seen several queries that went from being “transactional” in nature (i.e., showing results that assume the searcher is looking to buy something) to “informational” (i.e., showing results that assume the searcher is looking for information). So if you’re an ecommerce site that used to rank a product page for a particularly valuable medical-related query, and Google (or their algorithm) changed the Query Intent to informational and is not showing products anymore, you gotta step up your content game.

If this is the case, try to write a “buyer’s guide” or similar educationally-focused post that teaches rather than sells. Flex your authority and trust by showing you’ve got the searcher’s best interest at heart, and are just trying to spread knowledge.

We’ve seen some early promising results where rankings have popped back up after dialing back the sales-talk, turning away from pushing a product and just letting the content teach–and that’s it.

So check your main keywords to see if the Query Intent of the results has changed.

2. What kind of page is ranking, and does your site match up?

This will be brief, as it’s similar to point #1.

Choose a page that lost some rankings and look at what URL Google is ranking for your site. Is it a fresh af blog post? Is it the homepage?

Now look at the top 10 and compare. If you notice something like, most of the pages in the top 10 are deeply categorized blog posts, but you’ve been trying to rank the homepage…

3. User experience matters. A lot.

This may come as a shock, but Google doesn’t care about your site’s profit.

So if you need to be aggressive with ads to make enough to pay for all that beautiful epic content they want you to create, maybe say goodbye to your good rankings. If not now, soon.

Chances are, if you’re a big media site that’s ugly with deceptive ads, you probably got slapped in this last update.

Other obvious things that may have hurt your site (or will, if you don’t get it together):

- slow-loading sites

- pop-ups that block content (especially on mobile)

- excessive ads

- autoplaying videos

- and other terrible experiences.

4. All the obvious things you’ve heard

The update is not even a month old at the time this post is being written. An update this big and far-reaching can take months or even years to fully understand. Of course, SEOs are relentlessly curious and data-driven, so it’s possible to piece a lot together even only a few weeks out.

But the majority of what we understand about this update is ahead of us, not behind.

In the meantime, stick to (and follow!) the SEO advice you constantly hear. It’s your best guard against future updates.

Make sure your on-page SEO is solid.

Links are important; you need good ones.

Produce high-quality content that demonstrates your authority.

Link out to sources; don’t be outbound-link-greedy.

User experience is vital; make it good.

Need some help?

If you think you were impacted by the August 1st update and want to see if we’d be able to help, just hit up the contact page and I’ll get back to you ASAP.

Other reading on the subject that we enjoyed and learned from

- https://charlesfloate.co.uk/august-2018-algo-explained

- http://www.canirank.com/blog/core-algorithm-update-explained/

- http://www.canirank.com/blog/google-medic-update-data/

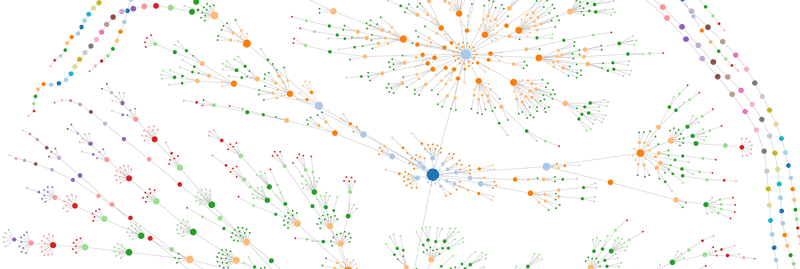

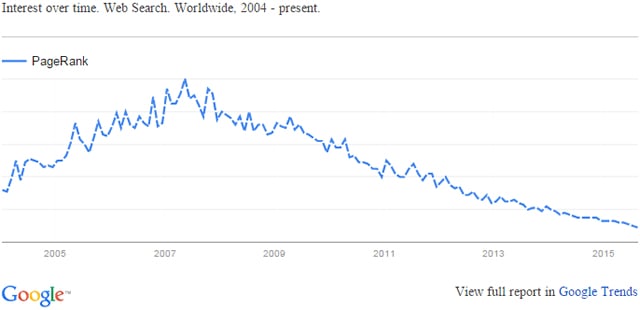

An Updated Page Rank

http://www.seobythesea.com/2018/04/pagerank-updated/

First off, yes Page Rank is still a thing. Google uses it within their ranking algorithm. The reason you probably haven’t heard of it for a few years is that they stopped publicly updating it.

Why?

Because SEOs used it to help them rank sites or sell services and Google has no love for SEOs…

So what’s this about an updated Page Rank?

SEO By the Sea is covering a recent update to a previously-granted patent pertaining to Page Rank. It’s some pretty complicated stuff (or at least, is written that way):

One embodiment of the present invention provides a system that ranks pages on the web based on distances between the pages, wherein the pages are interconnected with links to form a link-graph. More specifically, a set of high-quality seed pages are chosen as references for ranking the pages in the link-graph, and shortest distances from the set of seed pages to each given page in the link-graph are computed. Each of the shortest distances is obtained by summing lengths of a set of links which follows the shortest path from a seed page to a given page, wherein the length of a given link is assigned to the link based on properties of the link and properties of the page attached to the link. The computed shortest distances are then used to determine the ranking scores of the associated pages.

Basically, Google picks a set of trusted Seed Pages and scores a given page by how far away it is (via links) from the closest one. It’s like six degrees of separation from some really authoritative pages, with links.
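To make the seed-distance idea a bit more concrete, here is a minimal sketch of the computation the patent abstract describes: shortest distances from a set of seed pages to every other page in a link graph. The domain names and link lengths are made up for illustration, and the use of a multi-source Dijkstra search is our assumption about how you would compute it, not something the patent or Google confirms.

```python
import heapq


def seed_distances(link_graph, seed_pages):
    """Shortest link distance from the nearest seed page to each reachable page.

    link_graph maps a page to {linked_page: link_length}. The lengths are
    hypothetical stand-ins for the "properties of the link" in the patent.
    """
    distances = {page: 0.0 for page in seed_pages}
    queue = [(0.0, page) for page in seed_pages]
    heapq.heapify(queue)
    while queue:
        dist, page = heapq.heappop(queue)
        if dist > distances.get(page, float("inf")):
            continue  # stale queue entry, a shorter path was already found
        for target, length in link_graph.get(page, {}).items():
            candidate = dist + length
            if candidate < distances.get(target, float("inf")):
                distances[target] = candidate
                heapq.heappush(queue, (candidate, target))
    return distances


def rank_by_seed_distance(link_graph, seed_pages):
    """Order pages from closest to a seed page to farthest."""
    distances = seed_distances(link_graph, seed_pages)
    return sorted(distances, key=lambda page: (distances[page], page))


# Hypothetical link graph: one seed page and three ordinary pages.
example_graph = {
    "seed.example": {"a.example": 1.0, "b.example": 4.0},
    "a.example": {"b.example": 1.0, "c.example": 5.0},
    "b.example": {"c.example": 1.0},
}
example_ranking = rank_by_seed_distance(example_graph, ["seed.example"])
```

In this toy graph, b.example ends up closer to the seed through a.example than through its direct (but "longer") link, which is the practical point: a link from a page that is itself close to a seed can be worth more than a weak link from the seed.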

Google is Still Serious about HTTPS

https://www.blog.google/topics/developers/introducing-app-more-secure-home-apps-web/

Google just launched the new .app gTLD and here’s a really interesting part of using their new domain ending:

key benefit of the .app domain is that security is built in—for you and your users. The big difference is that HTTPS is required to connect to all .app websites, helping protect against ad malware and tracking injection by ISPs, in addition to safeguarding against spying on open WiFi networks. Because .app will be the first TLD with enforced security made available for general registration, it’s helping move the web to an HTTPS-everywhere future in a big way.

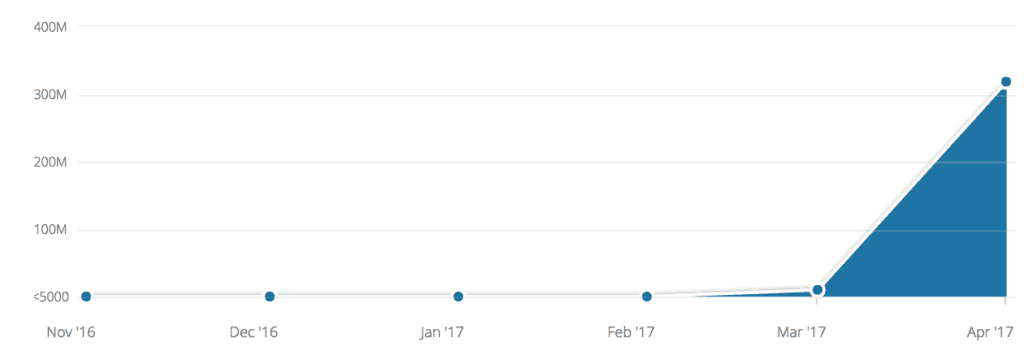

The Ultimate Black Hat SEO Bug (Now Squashed)

http://www.tomanthony.co.uk/blog/google-xml-sitemap-auth-bypass-black-hat-seo-bug-bounty

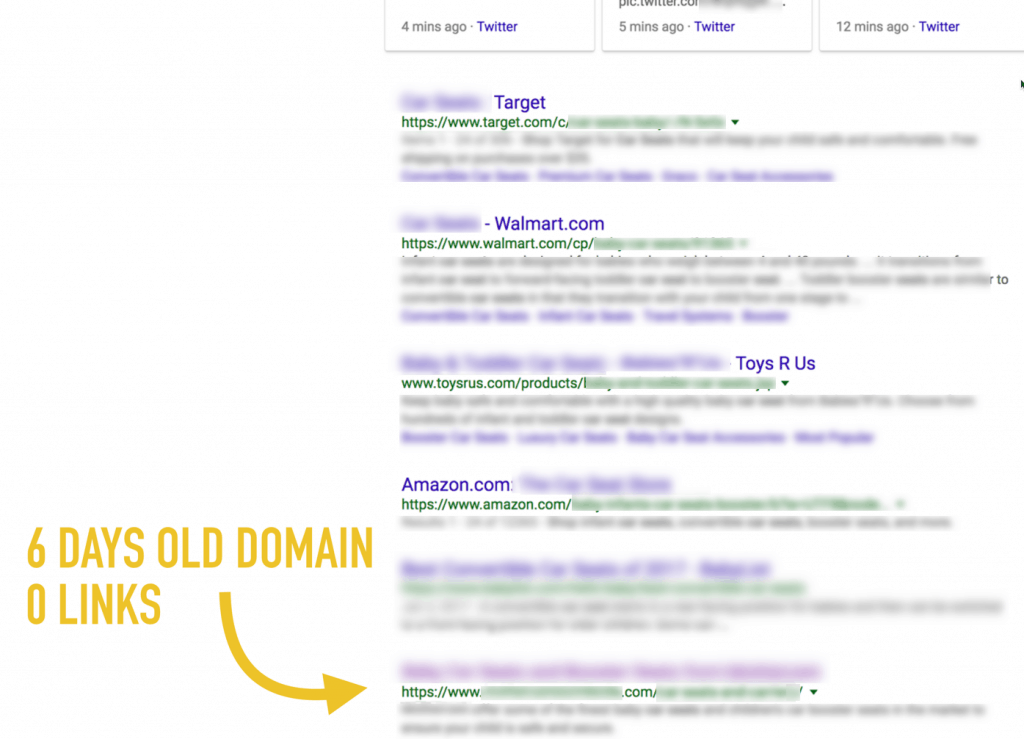

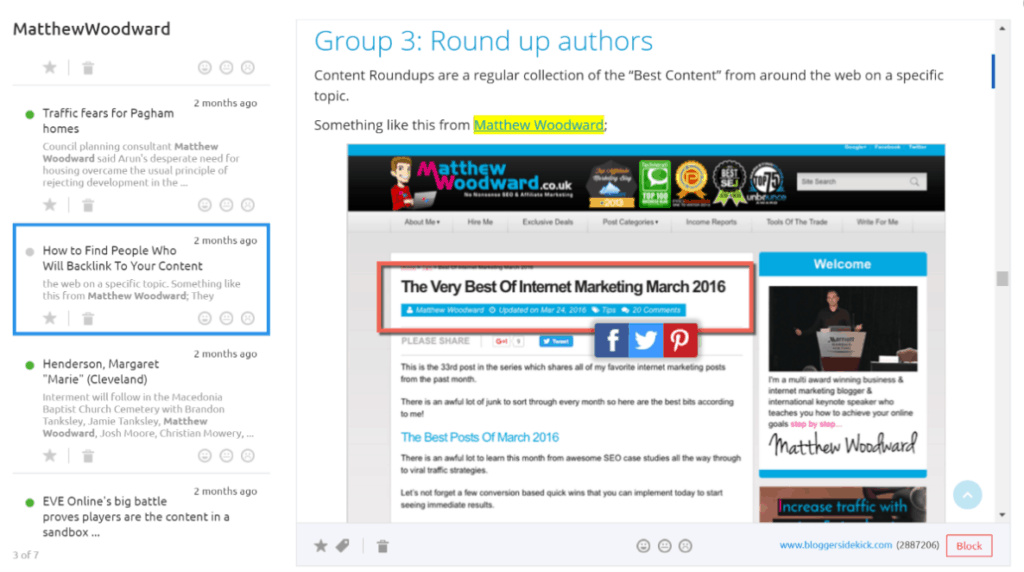

Recently, Tom Anthony discovered a way to rank a brand new site for some crazy-valuable keywords at the top of the SERPs:

I recently discovered an issue to Google that allows an attacker to submit an XML sitemap to Google for a site for which they are not authenticated. As these files can contain indexation directives, such as hreflang, it allows an attacker to utilise these directives to help their own sites rank in the Google search results.

I spent $12 setting up my experiment and was ranking on the first page for high monetizable search terms, with a newly registered domain that had no inbound links.

Leading to results like this:

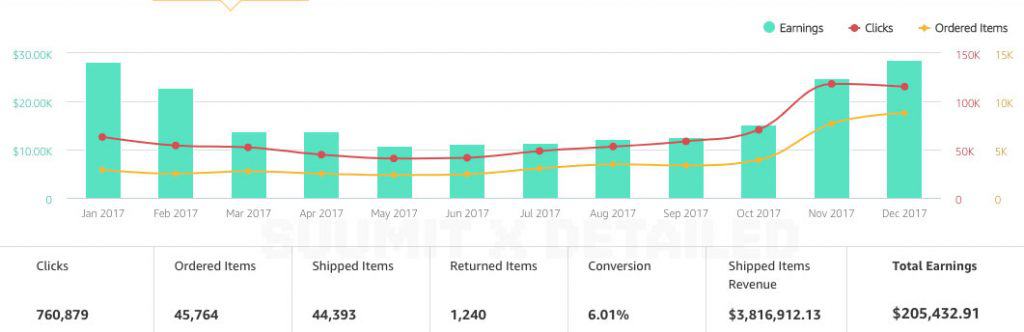

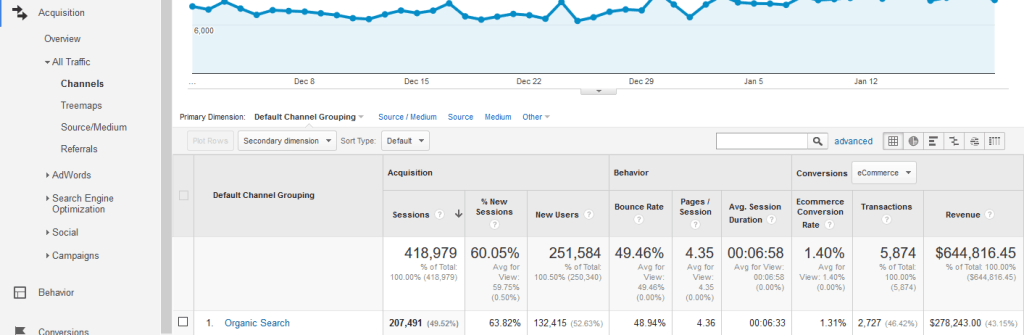

And traffic like this:

Click through and check out the post for all the details–it’s pretty amazing.
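If you have never seen hreflang directives inside an XML sitemap, here is a rough sketch of what such a file looks like, generated with Python’s standard library. The example.com URLs and the `build_hreflang_sitemap` helper are hypothetical, and this only shows the ordinary sitemap format for language alternates, not the bug itself.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"


def build_hreflang_sitemap(pages):
    """Build a sitemap string from a list of {language: url} dicts.

    Each dict describes one page and its language alternates. Every URL
    gets its own <url> entry that lists all alternates, itself included.
    """
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("xhtml", XHTML_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for alternates in pages:
        for loc in alternates.values():
            url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
            ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = loc
            for alt_lang, alt_loc in alternates.items():
                ET.SubElement(
                    url,
                    f"{{{XHTML_NS}}}link",
                    {"rel": "alternate", "hreflang": alt_lang, "href": alt_loc},
                )
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical page with an English and a German version.
sitemap_xml = build_hreflang_sitemap(
    [{"en": "https://example.com/en/", "de": "https://example.com/de/"}]
)
```

The takeaway for the bug: whoever gets to submit a file like this for a site is telling Google which pages are equivalents of each other, which is why submitting one for a site you don’t control was such a big deal.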

A Recent Core Algorithm Update

https://www.seroundtable.com/google-algorithm-update-25384.html

Did your site’s organic traffic get its ass kicked or start kicking ass lately? It may be due to a recent Google algorithm update. SE Roundtable posted about a bunch of chatter they started seeing on various SEO and webmaster forums about a possible update.

Shortly thereafter, Google actually confirmed the update (which they don’t always do).

Each day, Google usually releases one or more changes designed to improve our results. Some are focused around specific improvements. Some are broad changes. Last week, we released a broad core algorithm update. We do these routinely several times per year….

— Google SearchLiaison (@searchliaison) March 12, 2018

Since this was a core algorithm update, I haven’t seen much in the way of “these sites took a hit because of this reason” type of info. That kind of analysis tends to surface a few weeks after the fact, so I’ll keep my eyes open and post an update in a future This Week in SEO post to let you know.

The Chrome Browser Says: Not Secure

https://domainnamewire.com/2018/02/09/google-upping-ante-ssl/

Imagine you’ve put all this hard work and effort into building a site, ranking it well for a bunch of sweet keywords, and… no one stays on your site for more than 10 seconds.

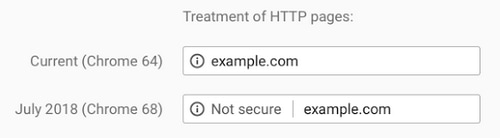

In an upcoming edition of Google’s Chrome browser, all websites without an SSL certificate will be marked “not secure.” Like this:

Starting in July, the latest version of Chrome will show the second notification for sites that don’t have SSL even if someone is not inputting information into a form field.

Why this matters to your SEO:

- People are going to see “not secure” and think your site will infect their computer.

- They’ll immediately go back to Google and Google will think “damn, people aren’t staying on this page a long time. I guess it’s not relevant.”

- Google will push your page further down the rankings because user-experience shows it’s not relevant.

- Your rankings go down.

It’s an easy fix, and you’ve got a few months to get it done, so…
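A quick way to get started is to check a list of your own URLs (from a crawl export or your sitemap, for example) for anything still on plain HTTP. Here is a minimal sketch; the `find_insecure_urls` helper and the sample URLs are made up for illustration, and it only looks at the URL scheme. It does not verify certificates, redirects, or mixed content.

```python
from urllib.parse import urlsplit


def find_insecure_urls(urls):
    """Return the URLs whose scheme is plain http."""
    return [url for url in urls if urlsplit(url).scheme == "http"]


# Hypothetical crawl export.
crawled_urls = [
    "http://example.com/",
    "https://example.com/pricing",
    "HTTP://example.org/contact",
]
insecure_urls = find_insecure_urls(crawled_urls)
```

Anything this flags is a page Chrome would label “not secure” once the change ships.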

Google Removes 96/101 GMB Reviews

Damn, Google!

So here’s a bit of local SEO drama for you (and a very costly lesson for you to learn from).

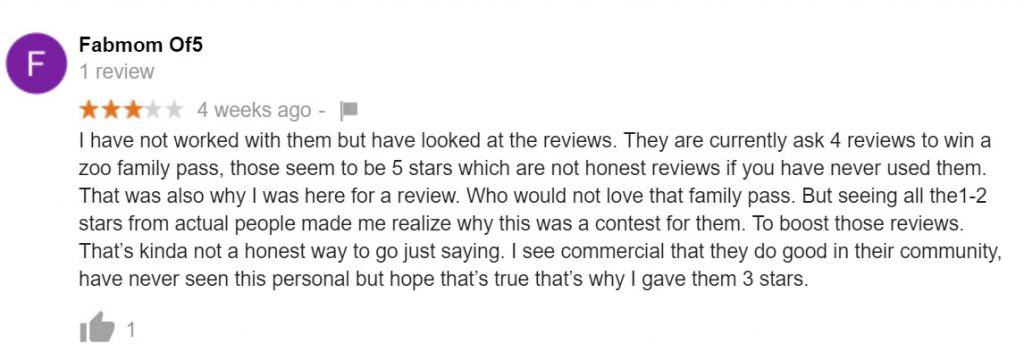

Someone complained on the Google My Business advertising forum that a competing law firm was incentivizing reviews by offering a free zoo pass as a reward, with the winner to be chosen from people who left them a review.

Here is a review from someone complaining about the practice:

Of course, most attorneys in the area have a few reviews which is totally normal. Almost all of their fake reviews came in at the exact same time 25-30 days ago. Although, there is a new push with more reviews popping up today, and there’s a batch that was all left on the same day 6mos ago.

They should have under 10 reviews at the very most.

Eventually, the law firm in question chimes in several times on the thread (with some super lawyer-y lingo like “pursuant” and “to the extent”) to say that they are not offering their services in return for reviews, and so they are not in violation of the guidelines.

I definitely recommend reading the full thread, but here’s how it ends (and this is the part you really should internalize):

Google’s team decided that the reviews WERE, in fact, against their guidelines:

“Reviews are only valuable when they are honest and unbiased. (For example, business owners shouldn’t offer incentives to customers in exchange for reviews.) Read more in our review posting guidelines. If you see a review that’s inappropriate or that violates our policies, you can flag it for removal.”

And here’s how it all shook out:

Ouch.

Another Algorithm Update (Probably!)

https://www.seroundtable.com/november-google-update-24720.html

Eventually these won’t be newsworthy anymore with the frequency they keep happening…

Okay, probably not. It’s always a big deal when Google drops an algorithm update like a diss track aimed at your website.

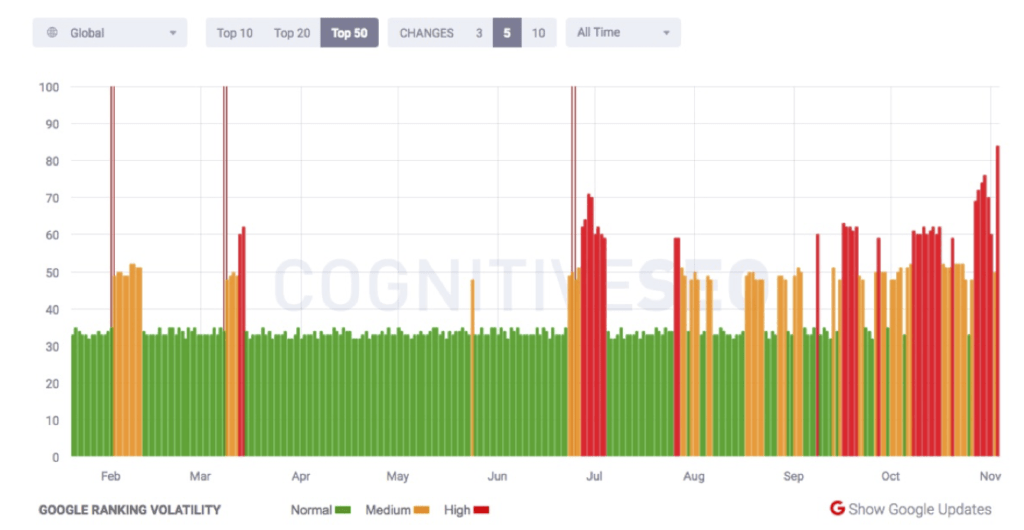

I am seeing signs both within the search community and from the automated tracking tools of an update with Google’s search results going on right now. The interesting thing is about 50% of the tools are reporting on the algorithm update and the other are not. Maybe Google is doing a 50/50 test on a new algorithm?

SERP/algorithm tracking site evidence:

What the F Has Been Going on in the SERPs Lately?

https://www.gsqi.com/marketing-blog/the-hornets-nest-fall-2017-google-algorithm-updates/

Basically:

Since August, we’ve seen a number of updates I would call significant. I actually can’t remember seeing that many substantial updates in such a short period of time.

This has been clear from the thousands of keywords we track here at Supremacy. While we’re used to seeing the occasional turbulence in the SERPs, rankings across the last couple of months have been more like paint on a speaker in slow motion:

So why the recent volatility (not even accounting for the recent mobile stuff)? Glenn Gabe takes a very smart, well-informed stab at it in this post. He makes a number of points, and I highly recommend you go through and read them all, but here’s the most likely (and most terrifying) explanation:

Google may be increasing the frequency of refreshing its quality algorithms. And the end goal could be to have that running in near real-time (or actually in real-time). If that happens, then site owners will truly be in a situation where they have no idea what hit them, or why.

Or, you could do something about it:

Based on the volatility this fall, and what I’ve explained above, I’m sure you are wondering what you can do. I’ve said this for a while now, but we are pretty much at the point where sites need to fix everything quality-wise. I hate saying that, but it’s true.

Thoughts on the August Algorithm Update

http://www.gsqi.com/marketing-blog/august-19-2017-google-algorithm-update/

Google has no chill. I’ve seen more movement in the SERPs this summer than I can remember in any previous quarter. And it’s all, as far as I can tell, been tied to a site’s quality score.

As I mentioned in my post about the May 17, 2017 update, Google seems to be pushing quality updates almost monthly now (refreshing its quality algorithms). That’s great if you are looking to recover, but tough if you’re in the gray area of quality and susceptible to being hit. Over the past several months, we have seen updates on May 17, June 25, July 10, and now August 19. Google has been busy.

There seems to have been another one in early September (around the 7th or 11th).

Lots of rankings doing this:

and this:

And a few killer sites doing this:

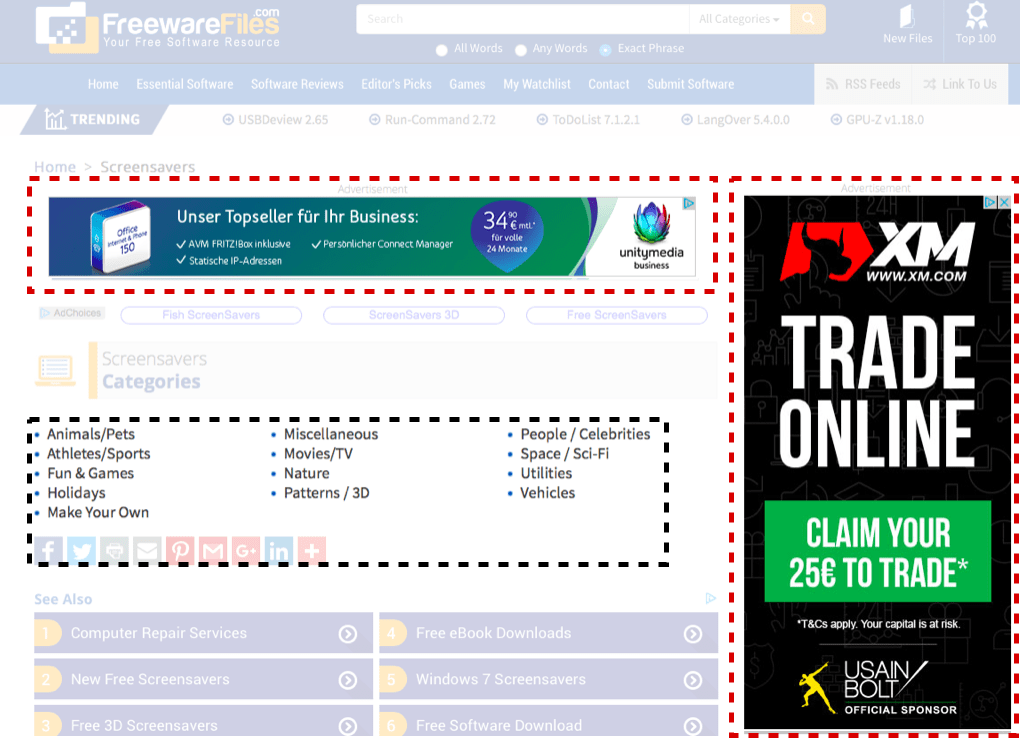

The post goes in depth on some examples of what may have caused these pages to be impacted (the usual suspects like thin content, lots of ads, and this):

It never ceases to amaze me how some sites absolutely hammer their visitors, attempt to trick them into clicking ads, provide horrible UX barriers, and almost give them a seizure with crazy ads running across their pages.

A good post to dig into and follow the advice for your site. It’s only going to get worse for your SEO if visiting your site is a UX disaster.

Auto-playing Video Ads in Google’s SERPs

http://searchengineland.com/google-confirms-testing-auto-playing-videos-search-results-279533

Google has confirmed with Search Engine Land that they are running a small experiment where they auto-play videos in the search results page. Jennifer Slegg spotted the test this morning after conducting some test searches using Internet Explorer. The video in the knowledge panel will auto-play if you are in this experiment.

And you were mad when they reduced the local pack from 7 to 3…

Big SERP Shakeup

It’s been a damn interesting week.

How’s your organic traffic doing?

It looks like there’s been a very significant Google algorithm update that started rolling out around June 23rd.

Here are a couple of questions you may be wondering about, and my answers:

Q: What is this? Panda? Penguin? Hummingbird? Pigeon?

A: ¯\_(ツ)_/¯ You see, SEO moves slowly, in general, and it takes time to study the data, to see common site elements that trend down, others that trend up, and really put the pieces together.

Q: Should I panic?

A: No. Probably not. I’ve seen many situations where a site owner has done more damage by trying to disavow a ton of links, 301 redirect a ton of others, and just generally muck-up the site’s architecture trying to get some rankings back. It’s better to take action once you understand the situation better.

I’ve also seen sites that get knocked down by a penalty and, after a week of poor rankings, end up ranking slightly higher than they did before once the dust settles. The algorithm can take a while to roll out, and aftershocks can further change things around. So don’t panic!

Here’s some more info on this algorithm update.

Search Engine Land Covering the Possible Update

http://searchengineland.com/google-algorithm-update-rolling-since-june-25th-277942

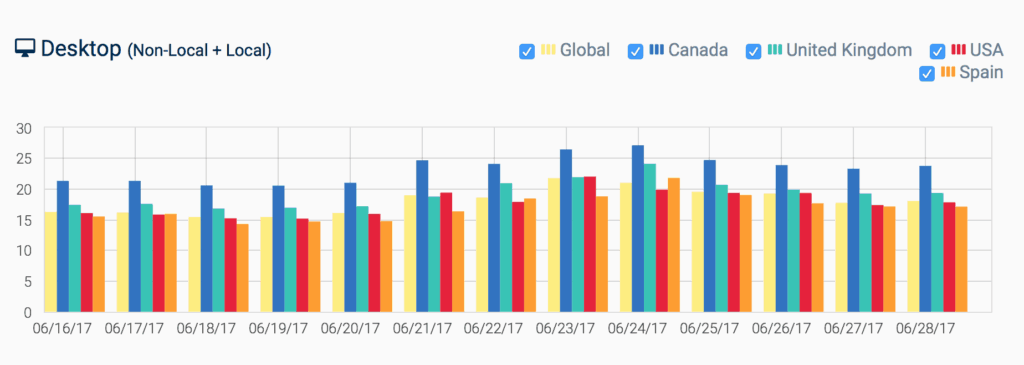

Google technically did not confirm it, outside of John Mueller’s typical reply to any questions around an algorithm update. But based on the industry chatter that I track closely and the automated tracking tools from Mozcast, SERPMetrics, Algoroo, Advanced Web Rankings, Accuranker, RankRanger and SEMRush, among other tools, it seems there was a real Google algorithm update.

Rank Ranger’s Early Coverage of the 2017 June Update

https://www.rankranger.com/blog/google-algorithm-update-june-2017-explained

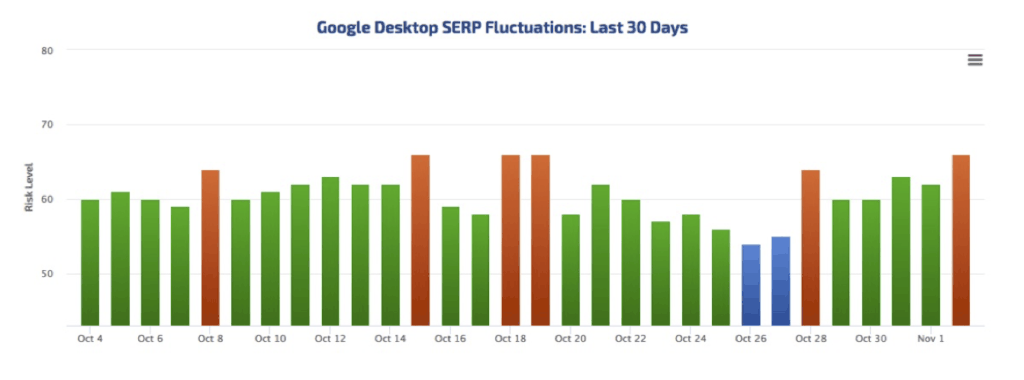

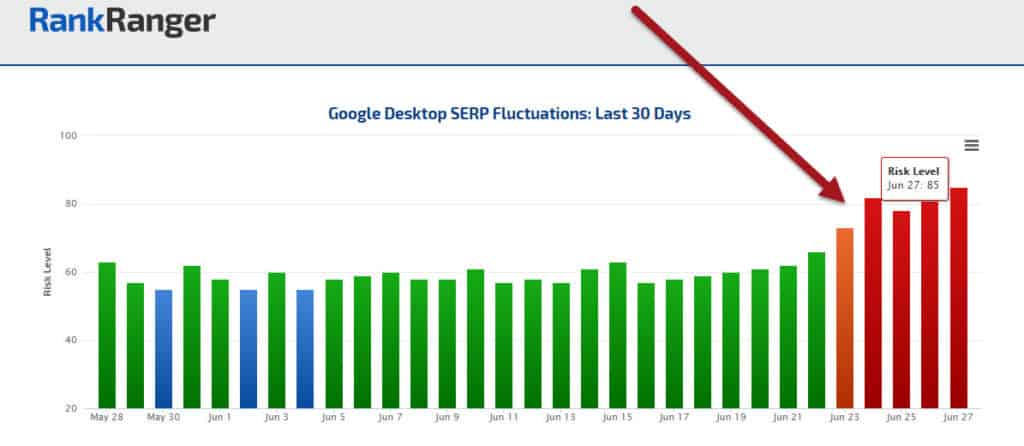

Despite the length of the current update, the initial chatter, per Barry Schwartz of SERoundtable, was quite light. This is obviously peculiar, not only in light of the length of the update, but the fluctuation levels themselves as well. The risk levels on our Rank Risk Index have risen above moderate, and show a continuous series of high fluctuation levels.

SERPWoo: A Bump in Volatility

https://www.serpwoo.com/stats/volatility/

SERPWoo tracks how much a particular niche fluctuates among the top 20, and then aggregates that data across several different verticals like mobile, desktop, search volume, etc.

You can definitely see a bump around the 23rd of June.

Oh Yeah, There Was a Sizable Update in May, Too

http://www.gsqi.com/marketing-blog/may-17-2017-google-algorithm-update/

Because two algorithm updates are better than one!

After digging into many drops, I saw the usual suspects when it comes to “quality updates”. For example, aggressive advertising, UX barriers, thin content mixed with UX barriers, frustrating user interface problems, deceptive ads, low quality content, and more.

Google’s Patent on Finding Authoritative Sites for the SERPs

http://www.seobythesea.com/2017/05/how-does-google-look-for-authoritative-search-results/

No, Siri, I said ‘find authority pictures–you know what? Nevermind’

Apparently Google looks for authoritative pages the same way you probably already do when doing research:

A patent granted to Google this week focuses upon authoritative search results. It describes how Google might surface authoritative results for queries and for query revisions when there might not results that meet a threshold of authoritativeness for the initial query. Reading through it was like looking at a mirror image of the efforts I usually go through to try to build authoritative results for a search engine to surface.

Very interesting stuff. If you’re feeling particularly perky some morning over coffee, I’d suggest giving this patent a read through. Seems likely that you may gain some insight into how Google frames or approaches authority web pages.

Also, go ahead and click through to read the full text of this article. I’m putting two here, but there are seven “takeaways” from the patent that I recommend becoming familiar with:

1. Google might maintain a “keyword-to-authoritative site database” which it can refer to when someone performs a query.

2. The patent described “Mapping” keywords on pages on the Web as sources of information for that authoritative site database.

Finally, this is all further proof that the best long-term SEO strategy is becoming an authority in your space.

How? Call me biased, but getting juicy, high-quality backlinks is part of a balanced SEO breakfast. Check out how our RankBOSS service can help.

Google’s New Algorithm Update Targets Fake News

https://stratechery.com/2017/not-ok-google/

This is a great update on Google’s relationship with, and response to, fake news.

From Bloomberg, on the update:

The Alphabet Inc. company is making a rare, sweeping change to the algorithm behind its powerful search engine to demote misleading, false and offensive articles online. Google is also setting new rules encouraging its “raters” — the 10,000-plus staff that assess search results — to flag web pages that host hoaxes, conspiracy theories and what the company calls “low-quality” content.

It’s always interesting to read about SEO-related issues from non-industry people. In this case, it’s Ben from the (amazing) tech blog Stratechery.

Framing the problem of fake news in relation to Google’s finances:

Google, on the other hand, is less in the business of driving engagement via articles you agree with, than it is in being a primary source of truth. The reason to do a Google search is that you want to know the answer to a question, and for that reason I have long been more concerned about fake news in search results, particularly “featured snippets.”

Google … is not only serving up these snippets as if they are the truth, but serving them up as a direct response to someone explicitly searching for answers. In other words, not only is Google effectively putting its reputation behind these snippets, it is serving said snippets to users in a state where they are primed to believe they are true.

The main criticism here is not in how Google handled the algorithm update, but in how they are changing the quality rater guidelines to now demote pages that it considers “not-authoritative:”

This simply isn’t good enough: Google is going to be making decisions about who is authoritative and who is not, which is another way of saying that Google is going to be making decisions about what is true and what is not, and that demands more transparency, not less.

Dear Google:

Drop Dead Fred: A Google Algorithm Update Analysis

http://www.sistrix.com/blog/googles-fred-update-what-do-all-losers-have-in-common/

Recently, there was a Google algorithm update that clever, hilarious SEOs named “Fred” after Gary Illyes said all future updates should be named “Fred.”

As usual, there has been a lot of speculation as to what this update entailed, but nothing super solid.

Recently, though, an SEO tool company called Sistrix has published some interesting findings after studying 300 sites:

Nearly all losers were very advertisement heavy, especially banner ads, many of which were AdSense campaigns. Another thing that we often noticed was that those sites offered little or poor quality content, which had no value for the reader. It seem that many, but not all, websites are affected who tried to grab a large number of visitors from Google with low quality content, which they then tried to quickly and easily monetize through affiliate programs.

According to this post, sites that look like this got hammered:

a.k.a. a page created to grab search traffic, with a low amount/terrible quality content and lots of ads.

Hopefully you’ve been taking our advice and creating solid content on the pages you’re trying to rank.

SEO IS DEAD!

http://searchengineland.com/googles-new-tappable-shortcuts-271690

JK. Any time any little thing happens/goes wrong in this industry, it’s all doom all the time.

But seriously, this probably is a pretty big deal, when combined with the mobile-first index and rise of mobile search:

Tappable shortcuts eliminating the need to search (for certain things)…

The shortcuts eliminate the need to search, providing quick answers around sports scores, nearby restaurants, up-to-the minute weather updates and entertainment information, like TV schedules or who won the Oscar for best supporting actress.

I mean, if you’re in any of those industries (sports scores, weather, etc), Google already ate your lunch and made you buy dessert.

Here’s a video on the new feature:

Google’s Biggest Competition

http://www.lsainsider.com/facebook-inches-closer-to-something-that-looks-like-local-search

Three years ago, if I were to put money on which multi-gajillion-dollar tech company would pose the biggest threat to Google’s search dominance, the smart money would have been on Apple.

But since all Apple has done in the past three years is NOT innovate Siri and make laptops with features no one asked for (I’m not bitter, YOU’RE bitter), it’s Facebook that’s stepping up its game.

Yesterday TechCrunch wrote about the test of an “enhanced local search feature” on Facebook. It’s an expanded version of Nearby Places: e.g., “coffee nearby.” It’s difficult to tell precisely what’s new here. However TechCrunch says the following: it’s “a list of relevant businesses, along with their ratings on Facebook, a map, as well as which friends of yours have visited or like the places in question.”

Here’s what it looks like:

As the article says, it’s been (and continues to be) a slow, steady ramp up for Facebook, but they’ve got the presence and the data to present a big threat to Google in the near future.

Rapid-Fire SEO Insights

Via @johnmu: Google can recognize keyword stuffing quickly & then can ignore the keyword completely on the website https://t.co/cOy45zJIdT pic.twitter.com/1SvIH3DAnW

— Glenn Gabe (@glenngabe) March 26, 2017

—

https://www.portent.com/blog/ppc/adwords-changing-exact-match-again.htm

Google is changing its definition of “exact match” keywords in AdWords.

The search query “hotels in new york” will be able to trigger an ad impression for the exact match keyword [new york hotels] because the word order and the term “in” can be ignored and not change the intent of the query.
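A minimal sketch of that idea in Python: treat a query and a keyword as matching when they contain the same words once function words are dropped and word order is ignored. The `FUNCTION_WORDS` set and the `is_close_variant` helper are assumptions made up for illustration; this is not Google’s actual matching logic, which also considers whether reordering changes the meaning.

```python
# Hypothetical illustration only, not Google's implementation.
# The function-word list below is an assumption.
FUNCTION_WORDS = {"in", "to", "for", "the", "a", "an", "of", "on", "at"}


def normalize(phrase: str) -> tuple:
    """Lowercase, strip exact-match brackets, drop function words, sort the rest."""
    words = phrase.lower().replace("[", " ").replace("]", " ").split()
    return tuple(sorted(w for w in words if w not in FUNCTION_WORDS))


def is_close_variant(query: str, keyword: str) -> bool:
    """True when query and keyword share the same content words in any order."""
    return normalize(query) == normalize(keyword)


print(is_close_variant("hotels in new york", "[new york hotels]"))
print(is_close_variant("hotels in new jersey", "[new york hotels]"))
```

The first call compares the example from the quote above; the second swaps in a different city so the content words no longer line up.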

Facebook Video Algorithm Update

https://seo-hacker.com/facebook-changing-rank-videos-advantage/

Yes, sometimes we talk about not-Google!

Here’s a summary of how the new Facebook video update works:

Simply put, your Facebook videos will be ranked according to how long people watch your video. If they watch it into completion, then your page will be rewarded accordingly. Of course, if a majority of the people who watch your video leave it halfway through then your content will be given the appropriate demerits.

Another case of user-engagement/experience being used as a ranking signal, but this time in Facebook.

Expect to see (and probably do this yourself in your videos) Facebook videos starting like this:

Hey, be sure to stick around to the end of this video for

As we’ve seen, when a search engine gives value to a metric, that metric is exploited mercilessly. 🙂

How Hummingbird Works

A super-optimized (for social sharing), in-depth post by Neil Patel(‘s ghost writer).

Hummingbird doesn’t get a lot of mentions in the day-to-day SEO blog circuit. Everybody is all Panda this and Penguin that.

But Hummingbird was a big deal–and still appears to be.

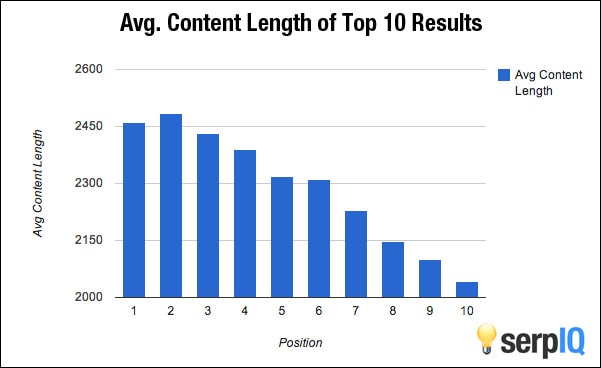

Here’s the list of eight takeaways from the study (most of which we’ve been talking about here, for forever). Check out the post to see the data behind the summary, and some of the individual content analyzed.

However, if you want to skip those weird ads, here’s the list:

- Select, refine and state your site’s topic using a clear purpose statement, above-the-fold content and specific navigation elements. (Don’t be content with fuzzy or broad statements.)

- Create long form content. (Avoid short content.)

- Create in-depth content. (Avoid generic content.)

- Summarize the purpose and intent of the site with specificity and directness. (Don’t hide your purpose or make it vague.)

- Create content that appeals to readers. (Don’t create content for search engines.)

- Create focused content. (Don’t try to provide comprehensive content on every sub niche in your niche.)

- Create a lot of content. (Don’t be happy with a few blog posts or evergreen pages.)

- Create content that is entirely relevant to your area of expertise. (Don’t write about off-topic subjects.)

Me, writing that last post:

Penguin 4.0 is Now Completely Rolled Out

https://www.seroundtable.com/google-penguin-4-rollout-complete-22831.html

Just an FYI — nothing too in-depth here.

Penguin 4.0 is confirmed by Google as having finished rolling out.

It’s like Jay Z sez: If your site’s still penalized I feel bad for you, son

Penguin 4.0 Recovery Case Studies

https://www.mariehaynes.com/penguin-4-0-recovery-case-studies/

Mmmm, case studies. The sustenance of SEOs everywhere.

This case study is done by Marie Haynes, and focuses on sites that were previously Google-slapped (Penguin punched?) and have recently recovered.

Lots of examples like this:

Hit by Penguin: This site was suppressed by a manual action for unnatural links several years ago. While they have made some improvements since then, I have always felt that they were still somewhat suppressed and have told them that they likely would see some improvement when Penguin finally updated.

Why? Large number of keyword anchored paid links as well as directory submissions.

What was done to attempt recovery? We did a thorough link audit and disavow. Many links were removed. Ongoing link audit and disavow work was done.

Did the site get new links while suppressed? This site has been working with a good SEO company and has managed to gain a good number of new links and also to continually improve their on-site quality.

Basically, thorough link audits + some disavows + new, strong links = the recipe for recovery (apparently).

Possum = “Near Me” Update?

http://www.localseoguide.com/possum-near-update/

While Penguin is still rolling out, just starting to un-kill sites that got slapped by version 3, the beginning-of-September local update (that lots of people are calling Possum) had some very real, very big consequences (both good and bad) for many local sites.

LocalSEOGuide looks at the impact of this update on “near me” queries (such as “Apple store near me,” “pizza near me,” etc.).

The findings?

Sites targeting “near me” searches saw a big boost, and the update seemed to be targeting sites that were using some SPAMMY techniques (which is a relative definition, I know) to rank locally.

While we didn’t see this in every case, strong local search domains that have been using this brand near me strategy appeared to start to be more relevant to Google for these queries. While the site in question is a nationally-known brand, we even saw this kind of activity on some of the smaller, far less well-known local search clients we work with.

The Quality Update; Not Penguin or Panda, but Still Important

There are more things to fear than just Pandas and Penguins.

So, while many still focus on Google Panda, we’ve seen five Google quality updates roll out since May of 2015. And they have been significant. That’s why I think SEOs should be keenly aware of Google’s quality updates, or Phantom for short. Sometimes I feel like Panda might be doing this as Google’s quality updates roll out.

This article focuses on the Phantom/Quality update, and why this algorithm update should be on your radar.

Short answer: because it can F your S up.

Click through to get a solid foundational understanding of Phantom/Quality updates from Glenn Gabe, one of my favorite SEO authorities.

An Update on Doorway Pages

https://webmasters.googleblog.com/2015/03/an-update-on-doorway-pages.html

Google is coming after your crappy-user-experience-created-only-for-SEO doorway pages (again).

From Google’s official site:

Over time, we’ve seen sites try to maximize their “search footprint” without adding clear, unique value. These doorway campaigns manifest themselves as pages on a site, as a number of domains, or a combination thereof. To improve the quality of search results for our users, we’ll soon launch a ranking adjustment to better address these types of pages. Sites with large and well-established doorway campaigns might see a broad impact from this change.

The post has a helpful list of things to check to make sure you are not using doorway pages, so definitely give that a run through, if you’re unsure.

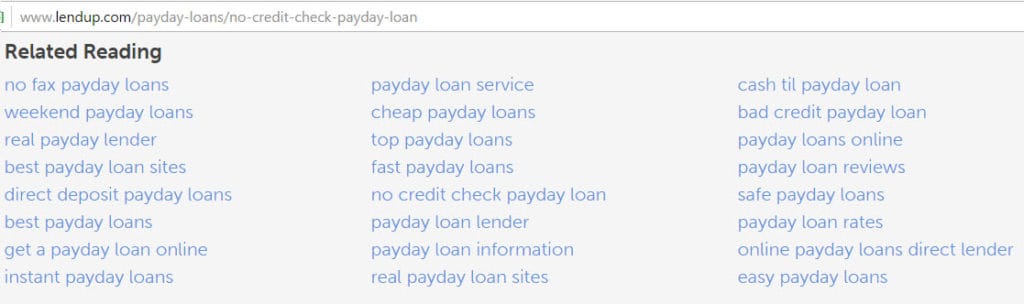

Funny thing, and a call-back to the SEObook post mentioned above: here’s a solid example from the Google Ventures-funded LendUp of exactly what doorway pages look like on a site:

LendUp currently ranks extremely well for all of those pages, if you’re wondering.

¯\_(ツ)_/¯

Official Google Updates

Guidelines for bloggers who review products they receive for free

https://webmasters.googleblog.com/2016/03/best-practices-for-bloggers-reviewing.html

Google has caught on to your link-building schemes. No longer can you send beef jerky or facial scrub to review sites in return for that sweet, sweet DoFollow link.

New guidelines now indicate you must:

- Use NoFollow links

- Disclose the relationship

- Write compelling, unique content

As a form of online marketing, some companies today will send bloggers free products to review or give away in return for a mention in a blogpost. Whether you’re the company supplying the product or the blogger writing the post, below are a few best practices to ensure that this content is both useful to users and compliant with Google Webmaster Guidelines.

The Webmaster Blog has a new domain name

https://webmasters.googleblog.com/2016/03/an-update-on-webmaster-central-blog.html

You can find the new site at webmasters.googleblog.com, instead of the old address: googlewebmastercentral.blogspot.com.

Why?

…starting today, Google is moving its blogs to a new domain to help people recognize when they’re reading an official blog from Google. These changes will roll out to all of Google’s blogs over time.

Even GOOGLE doesn’t use blogspot anymore…

How Google Prioritizes Spam Reports

http://www.thesempost.com/google-prioritizes-spam-reports-from-those-with/

Google prioritizes spam reports sent within Search Console over those submitted elsewhere. Google also prioritizes acting upon spam reports if they come from a source that has previously submitted reports that were helpful in cleaning up legitimate spam (instead of just being bitchy to competitors).

If you submit spam reports to Google, especially for spam within your niche, it would be more beneficial to you if you really do help clean up the space from spam by submitting valid reports, and not just randomly reporting competitors for tiny almost non-existent tiny violations.

AI is Transforming Google Search

http://www.wired.com/2016/02/ai-is-changing-the-technology-behind-google-searches/

TL;DR — The head of AI at Google is now the head of search, and with their increased adoption of deep learning neural networks to drive search rather than algorithms, maybe we can learn to love penguins and pandas again (and start hating on robots).

Yes, Google’s search engine was always driven by algorithms that automatically generate a response to each query. But these algorithms amounted to a set of definite rules. Google engineers could readily change and refine these rules. And unlike neural nets, these algorithms didn’t learn on their own. As Lau put it: “Rule-based scoring metrics, while still complex, provide a greater opportunity for engineers to directly tweak weights in specific situations.”

But now, Google has incorporated deep learning into its search engine. And with its head of AI taking over search, the company seems to believe this is the way forward.

Google Manipulates Search Results

http://recode.net/2015/06/29/yelp-teams-with-legal-star-tim-wu-to-trounce-google-in-new-study/

A study has come out, sponsored by Yelp, claiming that Google is manipulating its search results to favor its own web properties, presenting users with a poorer end product.

“The easy and widely disseminated argument that Google’s universal search always serves users and merchants is demonstrably false,” the paper reads. “Instead, in the largest category of search (local intent-based), Google appears to be strategically deploying universal search in a way that degrades the product so as to slow and exclude challengers to its dominant search paradigm.”

And here’s the best quote from the report that was published:

The results demonstrate that consumers vastly prefer the second version of universal search. Stated differently, consumers prefer, in effective, competitive results, as scored by Google’s own search engine, than results chosen by Google. This leads to the conclusion that Google is degrading its own search results by excluding its competitors at the expense of its users. The fact that Google’s own algorithm would provide better results suggests that Google is making a strategic choice to display their own content, rather than choosing results that consumers would prefer.

I don’t have to point out that Google is possibly getting… “penalized” …for manipulating the search results, do I? Because, that’s kind of hilarious…

Amid the antitrust suit in Europe, Google is not having the best time right now.

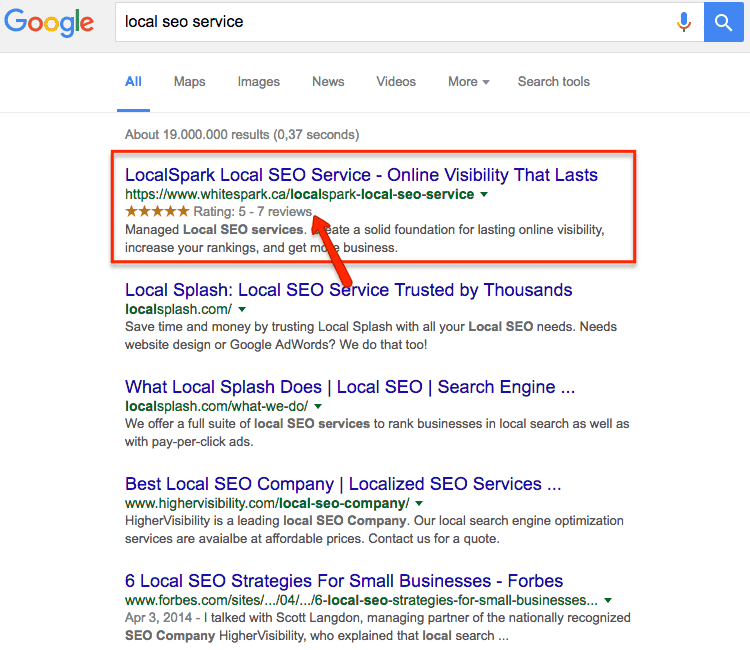

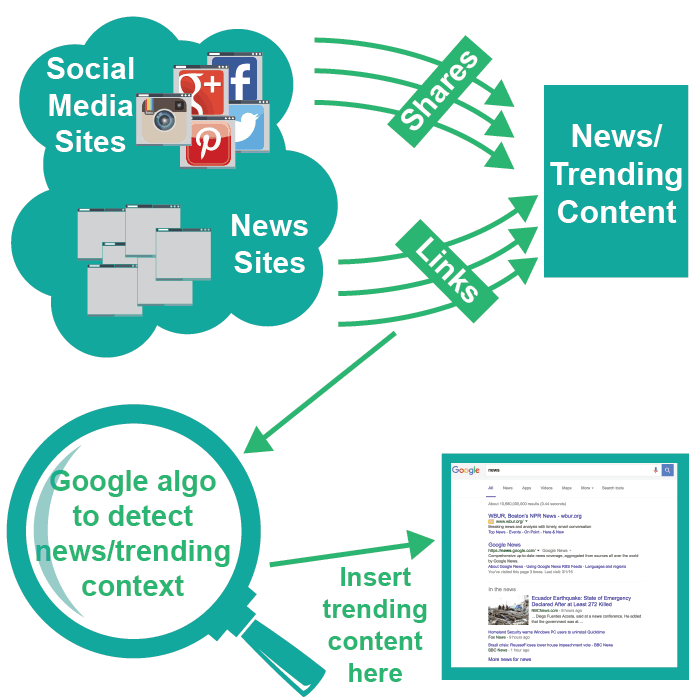

New Google Algorithm: the “Newsworthy Update.”

http://searchengineland.com/report-last-weeks-google-update-benefited-news-magazine-web-sites-223717

Last week saw a new Google update, not related to any of their recurring algorithm updates. This update, being unofficially called the “Newsworthy Update,” boosted SERP visibility for many sites that cover fresh, news-related content.

Check out Search Engine Land for the full story.

Google Wants to Rank Websites Based on Facts not Links

Google is seeking to rank websites based on factual accuracy, rather than skewing towards sites with the highest number of incoming links, as it has in the past. They are working on a Knowledge-Based Trust score that assesses the number of incorrect facts on a page and deems websites with the fewest incorrect facts the most trustworthy. Apps already exist that perform similar assessments, like weeding out spam e-mails from your inbox or pulling rumors from gossip websites to verify or debunk them.

From the article: “A Google research team is adapting that model to measure the trustworthiness of a page, rather than its reputation across the web. Instead of counting incoming links, the system – which is not yet live – counts the number of incorrect facts within a page. “A source that has few false facts is considered to be trustworthy,” says the team. The score they compute for each page is its Knowledge-Based Trust score.”
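To make the “count the incorrect facts” idea concrete, here is a toy sketch. It assumes facts have already been extracted from a page as (subject, predicate, value) triples and that a reference set of known-correct facts exists. Both the `REFERENCE_FACTS` data and the `trust_score` function are made up for illustration; the article doesn’t describe how Google’s actual (not yet live) system computes its score.

```python
# Toy sketch only: the real Knowledge-Based Trust model is not described here.
# REFERENCE_FACTS is made-up illustration data.
REFERENCE_FACTS = {
    ("Paris", "capital_of"): "France",
    ("Water", "boils_at_celsius"): "100",
}


def trust_score(page_facts):
    """Return the share of checkable facts on a page that agree with the reference.

    page_facts: list of (subject, predicate, value) triples extracted from a page.
    Facts missing from the reference are skipped rather than counted as wrong.
    Returns None when nothing on the page can be checked.
    """
    checked = 0
    correct = 0
    for subject, predicate, value in page_facts:
        expected = REFERENCE_FACTS.get((subject, predicate))
        if expected is None:
            continue  # nothing to compare against
        checked += 1
        if value == expected:
            correct += 1
    return correct / checked if checked else None


page = [
    ("Paris", "capital_of", "France"),
    ("Water", "boils_at_celsius", "90"),
]
print(trust_score(page))
```

A page with fewer false facts scores closer to 1, which is the “few false facts means trustworthy” idea from the quote, minus everything that makes the real problem hard (extracting the facts and knowing which reference to trust).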

Bringing a Site Back from the Dead

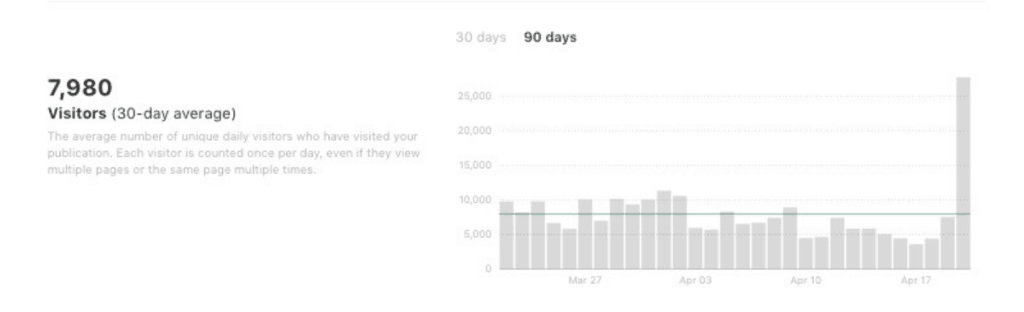

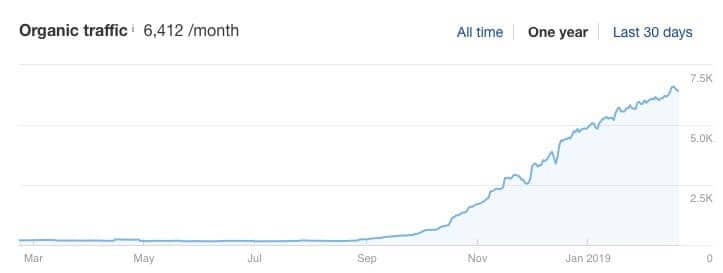

https://www.rankxl.com/reviving-a-penalized-site-to-6400-visits-per-month-case-study/

Good case study here.

Backstory: some guy bought a website but never did anything with it. Fast forward a few neglected years and leading up to Q4 2018 the site was also pretty abandoned by visitors and getting no love from Google.

This post covers what the author did to re-trafficize™ the site, including:

- fixing thin content

- updating good articles

- disavowing links

Since this website wasn’t really worth much we decided to experiment and have a plan consisting of 2 parts.

Step 1. Check the website and delete all the content which wasn’t ranking or content that was considered as thin or low quality in the eyes of Google. Same for low quality backlinks.

Step 2. If the first part gave results (fortunately it did), we could move to the second phase of the plan which was to invest some money in it, write more content and build some high quality backlinks to boost the rankings.

The results:

Sweet.

What it Takes to Rank in Higher Education Niches

https://www.serpwoo.com/blog/analysis/seo-for-higher-education-marketing/

This is a good case study–not just for the takeaways, but for the research process. It seems like a lot of people just want to be told what to do (e.g. sign up for our link building service) instead of researching and understanding their industry, their competitors, and what it takes to rank for their best keywords.

Obviously this is easier to do if you have some SEO skills yourself, but not impossible. In this case study, they crunch some data they get by digging through the sites on page one for various keywords to draw some conclusions:

Our findings show that page one URLs have more nofollow backlinks than page 2-10 URLs.

And while some might scream this is an example of page 1 URLs having more total backlinks overall, and thus some of those will be nofollows.. it doesn’t account for the fact that URLs on page 3 who have a similar number of total backlinks ( but very few nofollow ) aren’t on page 1.

If total backlinks for page 1 URLs and page 3 URLs are similar, but nofollows are less on page 3 and more on page 1 URLs, then you have to give the credit that nofollows ARE helping in rankings.

I’m not trying to start a conversation around the value of nofollow links OR endorse them as the thing your backlink profile is missing, so don’t @ me. I’m just pointing out that this case study surfaces some interesting data.

Is it likely that the sites ranking on page one provided some value and that there are other factors accounting for their high rankings, one consequence of which was earning lots of nofollow links?

YEAH PROBABLY.

But that’s a signal that you should pay attention, and dig in further. WHY are they getting more nofollows than the page three sites?

That’s just one tiny piece of one data point you can dig in to. Check out the whole study to get a sense of the process and data a professional SEO looks at when trying to rank a site.

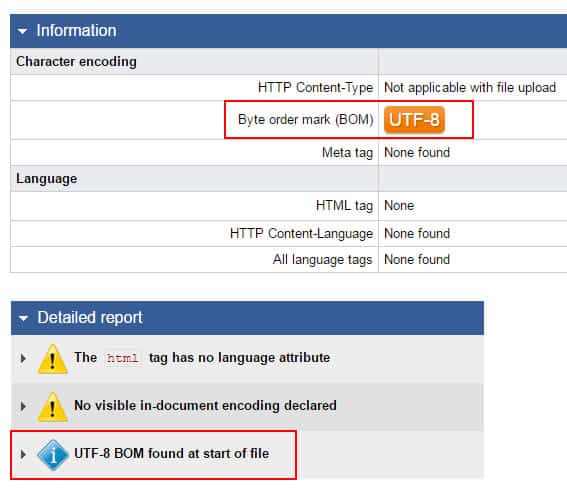

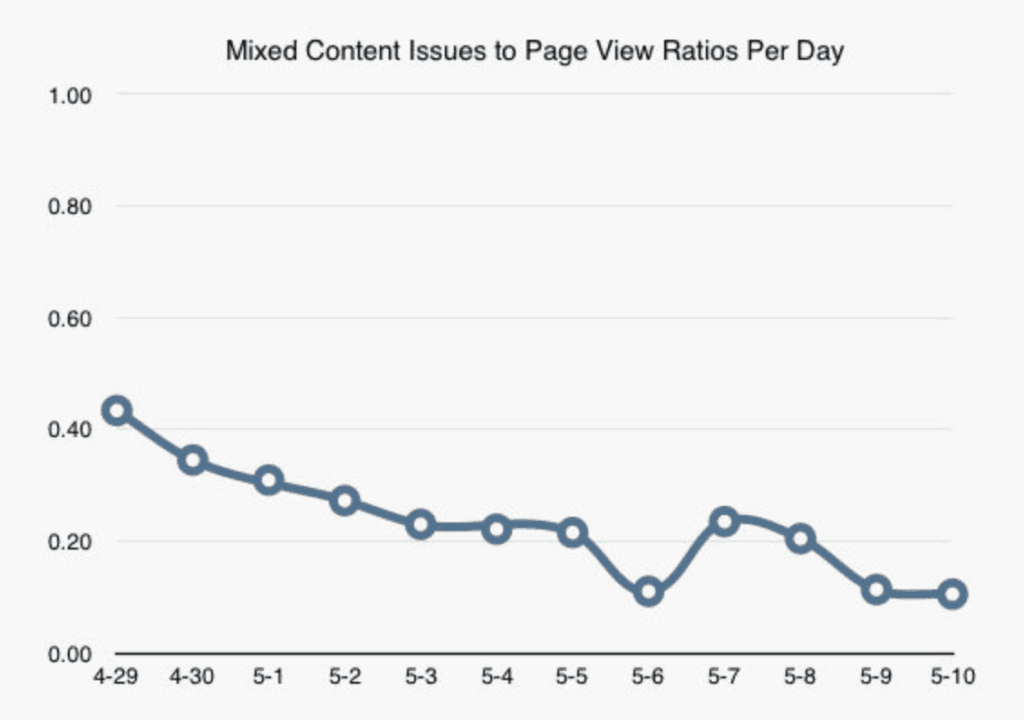

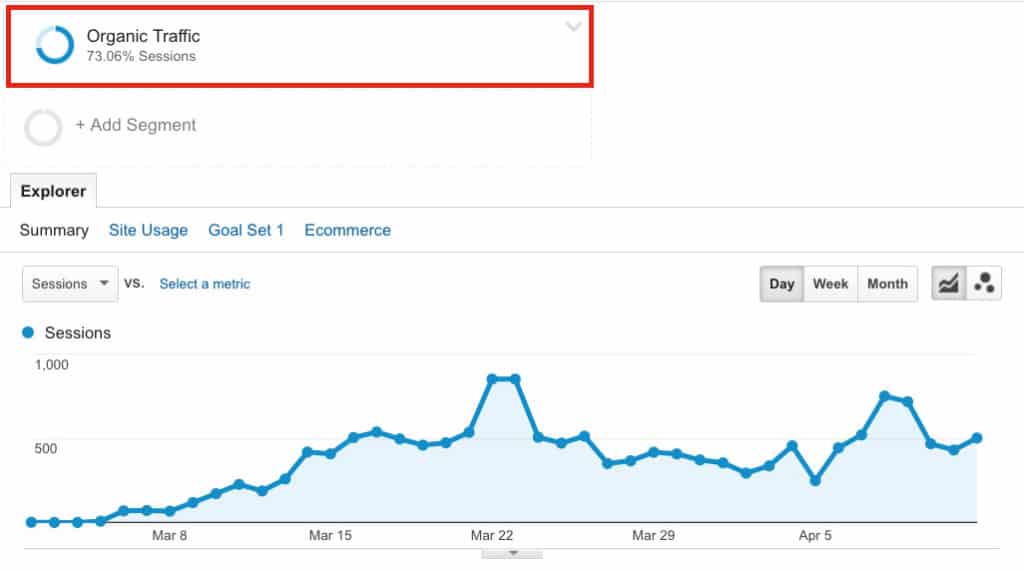

Addressing and Ignoring Technical SEO

https://ftf.agency/technical-seo-case-study/

This is actually a post from 2015 with a few updates and a new published-on date, so I’m gonna include it here because a) it sticks with the case study theme, and b) it’s genuinely interesting and important content.

Technical SEO.

By fixing some pretty terrible technical SEO issues (and leveraging “traffic leaks”) Nick was able to grow the site from 7-8,000 visits per day to an average of 25,000.

Key takeaways:

- Leverage your site’s internal link equity and your strongest page’s citation flow. Use this to your full advantage to send link equity to the pages that need it most.

- Optimize your site’s crawl budget and make sure you’re not wasting Googlebot’s time crawling pages with thin or near-duplicate content.

- Don’t underestimate the power of properly built and optimized sitemaps, and make sure you’re submitting them regularly through GSC.

- Put in the work to engineer a few big traffic pops, while they may only be a flash in the pan in terms of growth; the sum of these parts will create a larger whole.
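On the sitemap point, here is a minimal sketch of generating a sitemap file’s contents with Python’s standard library. The `build_sitemap` helper and the example.com URLs are placeholders made up for illustration; the namespace is the one defined by the sitemaps.org protocol. The case study doesn’t say how its sitemaps were built, so treat this as one possible starting point.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Build a sitemap XML string from an iterable of (loc, lastmod) pairs.

    lastmod may be None or an empty string, in which case it is left out.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in urls:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


# Placeholder URLs for illustration.
pages = [
    ("https://example.com/", "2019-01-15"),
    ("https://example.com/blog/technical-seo/", None),
]
print(build_sitemap(pages))
```

Only listing the pages you actually want crawled (and leaving out thin or near-duplicate ones) ties this back to the crawl budget takeaway.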

The updated part of this post mostly relates to this graph:

Which shows that the site was sold shortly after the work described here, and that the new owners neglected on-page SEO pretty badly.

Why Google Doesn’t Use CTR and E-A-T Like You Think

An SEO think-piece (rant) on how many SEOs are full of shit and correlation does not imply causation, and how, with cherry-picked data, some SEOs spread misinformation that refuses to die because it gets caught in a viral feedback loop.

Google does NOT use E-A-T as a ranking signal.

It doesn’t matter how often Googlers reject that belief. It doesn’t matter how much they debunk it. The same people come back time and again and proclaim their correctness, asserting they finally have proof that the E-A-T- Fairy is real and bestowing blessings upon everyone.

Wikipedia alone proves there is no E-A-T ranking signal. Wikipedia is not only NOT an expert Website, the news media has published many stories about subject-matter experts being driven away from Wikipedia. Although Wikipedia’s community has responded to these criticisms and attempted to adjust their policies through the years to promote better editing, their fundamental principle of allowing consensus from the unwashed masses to make decisions has led to many potentially good articles being edited into mediocrity. In some cases outright false information persists in Wikipedia articles because their rules against “edit wars” favor the people who are clever enough to revert accurate corrections.

You’ll most likely have a strong reaction to this post, whether for or against, but that’s a good thing. On the one hand, it’s important to try and validate on your own the kinds of things some very visible SEOs claim. On the other, Google has zero interest in having any of us really understand how their algorithms work (outside of making sure our sites can be easily crawled for maximum indexing and scraping, but that’s a story for another day).

So, I mean, you’ve got to accept SOME advice eventually. Probably helpful to read something from both sides of the SEO aisle as much as possible. For every Marie Haynes theory read something from Michael Martinez.

Then start drinking…

Breaking Down Search Intent

https://www.contentharmony.com/blog/classifying-search-intent/

Search intent has always been important, but it really stepped into the spotlight in Q2/Q3 2018 when the “medic update” shook up the SERPs in a big way. Now, it pays not just to consider the search intent (which was best practices pre-Medic), but to constantly evaluate what Google considers the intent to be.

This post breaks down intent even further than the traditional buyer vs. informational and has some really smart things to say overall.

For instance, breaking down search intent classifications even further to pull “answer intent” from the broader “informational:”

Slightly different from research, there are quite a few searches where users don’t generally care about clicking into a result and researching it – they just want a quick answer. Good examples are definition boxes, answer boxes, calculator boxes, sports scores, and other SERPs that feature a non-featured snippet version of an answer box, as well as a very low click-through rate (CTR) on search results.

Highly recommended read–and apparently they have a tool coming out that helps identify search intent by keyword.

Does AMP Give SEO Ranking Benefits?

https://www.stonetemple.com/amp-impact-on-rankings-conversions-engagement/

Google says AMP carries no SEO advantage, but Google says a lot of things…

What does the data say, though?

Stone Temple took a(n admittedly small) sample of AMP sites and ran some numbers:

Overall, 22 of the 26 websites (77%) experienced organic search gains on mobile. Other areas of improvement include SERP impressions and SERP click-through rates. A summary of the results across all 26 sites is as follows:

27.1% increase in organic traffic

33.8% increase in SERP impressions

15.3% higher SERP click-through rates

But were there rankings gains?

Yes, kind of–but the study’s author is reluctant to say it was due to AMP:

what we saw was that 23 of the 26 domains saw an increase in our Search Visibility score. 17 of these domains saw an increase of 15% or more, and the average change in Search Visibility across all 26 sites was a plus 47%.

This data suggests that there were some rankings gains during the time period. So that still leaves us with the question as to whether or not having an AMP implementation is a direct ranking factor. In spite of the above data, my belief is that the answer is that it’s not.

Check out this one for the full scope of the study.

Are Web Directories Still Relevant for SEO in 2019?

https://cognitiveseo.com/blog/21291/web-directories-seo/

If they are high quality and relevant, yes.

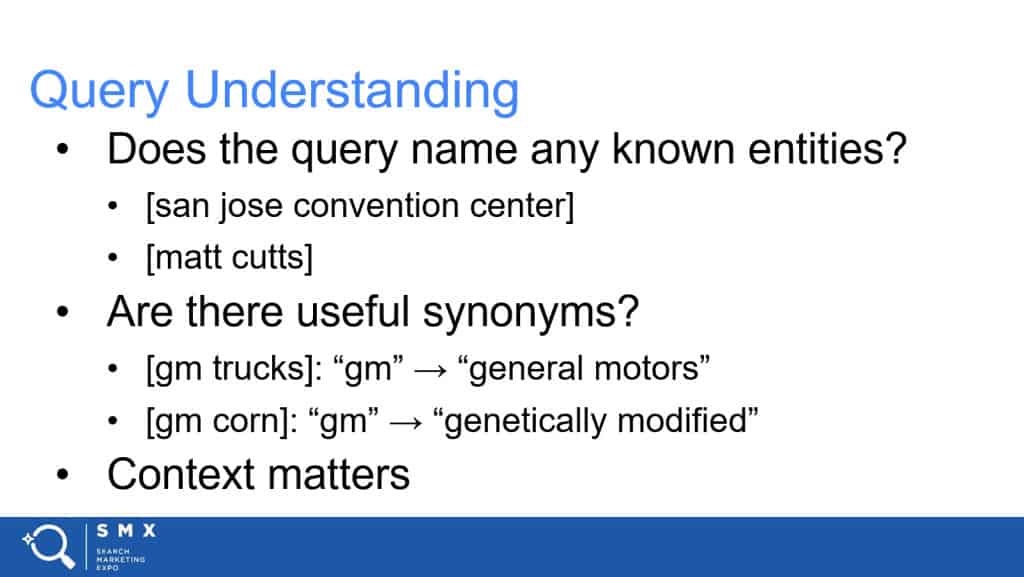

Understanding Query Syntax

http://www.blindfiveyearold.com/query-syntax

This is a very smart post for advanced SEOs only. I mean, go read it if you’re not an SEO but you’ll be like:

This is another post, essentially, on search intent:

It’s our job as search marketers to determine intent based on an analysis of query syntax. The old grouping of intent as informational, navigational or transactional are still kinda sorta valid but is overly simplistic given Google’s advances in this area.

Knowing that a term is informational only gets you so far. If you miss that the content desired by that query demands a list you could be creating long-form content that won’t satisfy intent and, therefore, is unlikely to rank well.

Query syntax describes intent that drives content composition and format.

I’m not gonna attempt to summarize this post here, but instead I will encourage you to go read the whole post.

Topic Clusters

https://alfredlua.com/seo-topic-clusters/

Lots to focus on with content this week.

This post, by the Growth Editor at Buffer.com, will be most helpful to you if you’re just about to do a big content push and you’re looking for a little direction.

Similar to silo-ing pages, this post explores how some of the content at Buffer is created and structured on the site.

So far, I have seen two styles for the main page. The first is a pillar page — a long-form guide, often known as an “ultimate guide” that covers the topic comprehensively. The second is a hub page — something like a table of contents with a summary of each sub-topics and a call to action to read the respective supporting articles. We have tried both at Buffer (pillar page example and hub page example). To determine which style to use, I like to search for the topic on Google and see which style most of the top 10 articles use.

It also covers some of the mistakes they’ve made, which is helpful to see:

A highly recommended read.

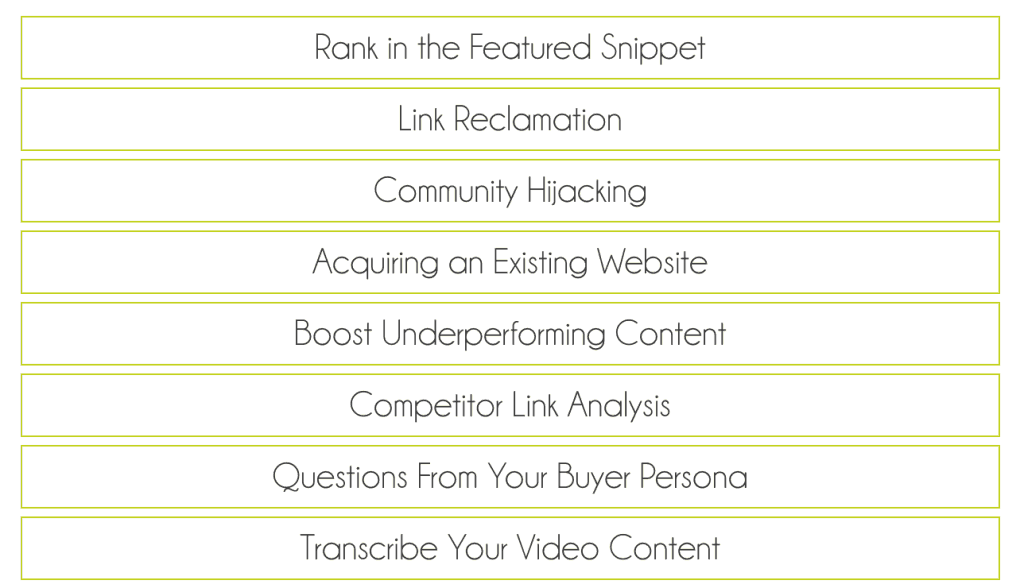

Featured Snippets FTW

https://www.orbitmedia.com/blog/featured-snippets/

If you can’t beat ’em, join ’em. If you can’t get them to send traffic to your high quality, relevant content, win the featured snippet so at least it’s your content they’re displaying.

…not quite as catchy.

Every year there are reports of Google scraping data from websites (against Google’s webmaster guidelines if YOU do it) and displaying it in various feature-rich SERPs, saving the searcher a click. While featured snippets decrease the number of clicks the standard organic-10 results get, there’s nothing you can really do about that except try and get the featured snippet in addition to the best organic spot.

Not sure where to start?

Orbit Media’s post will get you most of the way there:

If your content gets a featured snippet for one search query, the chances to win featured snippets for other similar ones are great enough. As your site already has an appropriate structure to provide a quick answer, it’s more likely Google will consider it to be a great option for the related queries.

Expired Domain SEO [Case Study]

https://detailed.com/expired-domain-seo/

Another great post from Glen Allsopp (outing as per usual).

This one is a close-up look at how an entrepreneur in India is making 5 figures per month with an affiliate site, specifically using expired domain redirects as a way to build links/pass on authority.

Of course, this is nothing new to SEO veterans, but if it’s a new idea to you, give it a read. The post also had a few reactions from SEO professionals which was an interesting addition.

The site is a pretty amazing anomaly if you ask me. The design is as basic as it gets, the content is thin – has no tone or legitimacy, and the internal links are partial match at best.

Yet the site has made strategic use of some old school link building techniques that definitely still work and managed to rack up over 2,300 RD’s in Ahrefs and rank for over 28k keywords, with nearly 1,800 ranking on the first page of Google. Most of these terms are commercial intent terms with several thousand searches per month each.

It’s a great way to get some insights into what a successful site owner is doing to generate traffic in an honest way, without an info product attached to it.

Just How Big Your Website Actually Is

https://www.gsqi.com/marketing-blog/how-to-find-true-size-of-your-site-index-coverage-reporting/

Size of your site != how many pages are indexed.

This is definitely getting into the nerdier side of things, but understanding this can be really important to your SEO efforts.

…crawl budget might also be a consideration based on the true size of your site. For example, imagine you have 73K pages indexed and believe you don’t need to worry about crawl budget too much. Remember, only very large sites with millions of pages need to worry about crawl budget. But what if your 73K page site actually contains 29.5M pages that need to be crawled and processed? If that’s the case, then you actually do need to worry about crawl budget. This is just another reason to understand the true size of your site.

This is a pretty thorough post, taking you through all the reasons why understanding your site’s size is important, the different ways your site could get out of control, and how to rein it all in.

Good stuff, highly recommended.

E-A-T is Not a Search Algorithm

http://www.blindfiveyearold.com/algorithm-analysis-in-the-age-of-embeddings

It’s almost the Thanksgiving holiday here in the US, so I’ll start by saying how thankful I am for this post, which is kind of the culmination of a lot of I’m-just-guessing-but-take-this-as-gospel SEO advice-giving lately.

When the August 1st update hit, many people had never seen anything like it. Even battle-hardened SEOs were kind of shocked at what they were seeing. Like anyone would when the metaphorical rug is yanked out from under them, people whose sites got smashed down in the SERPs looked to whatever confident voice they could find and held on for dear life.

The problem with proclaiming why a Google algo update happened and how to fix it, as this post shows, is that it takes a long time to sort through the data–or even a long time to collect the data.

It can be hard to do nothing when your traffic is down 75% overnight, but it’s even worse to make a bunch of changes to your site before you understand why you lost that traffic in the first place.

I have seen a lot of “experts” talking about how E-A-T was being more heavily weighted with this new update, but E-A-T is not part of an algorithm:

The problem is those guidelines and E-A-T are not algorithm signals. Don’t believe me? Believe Ben Gomes, long-time search quality engineer and new head of search at Google.

“You can view the rater guidelines as where we want the search algorithm to go,” Ben Gomes, Google’s vice president of search, assistant and news, told CNBC. “They don’t tell you how the algorithm is ranking results, but they fundamentally show what the algorithm should do.”

So I am triggered when I hear someone say they “turned up the weight of expertise” in a recent algorithm update. Even if the premise were true, you have to connect that to how the algorithm would reflect that change. How would Google make changes algorithmically to reflect higher expertise?

Google doesn’t have three big knobs in a dark office protected by biometric scanners that allows them to change E-A-T at will.

So what did change with these recent big updates?

To put it as succinctly as possible: Google’s algorithms are reassessing search intent.

There’s no way I can sum up this post in a few paragraphs. This post is what I mean when I say “good SEO sleuthing takes time,” (ok I’ve actually never said the word “sleuthing”). It takes hours and hours to sort through the SERPs and the new rankings–that’s why there’s no “state of the algorithm update” post right when it happens–or at least, there shouldn’t be.

If you read only one post when you’re trippin on tryptophan this Thursday, make it this one.

A Different Kind of International SEO

https://www.searchenginejournal.com/google-algorithm-loopholes/278093/

So, a Facebook group had a contest to rank a new site for “Rhinoplasty Plano.”

The runner up (2nd spot) was a site entirely in Latin.

Yes, Latin, the dead language spoken by the Romans and, one assumes, inspiration for Pig Latin.

So how the hell did someone rank a site entirely in Latin when Google is so smart and has invested in neural networks to have their algorithms reward relevant sites and etc. etc. etc.?

Position two is held by a site written almost entirely in Latin, Rhinoplastyplano.co. It mocks everything Google says about authority and quality content. Google ranking a site written in Latin is analogous to the wheels falling off a car.

Basically, they did all the things you’re supposed to do to a local site: map on the website, schema mark-up, optimized the page, etc. Just… with Latin content.

They say their intent wasn’t to shame Google or anything but… it’s pretty bad optics for Google to keep talking about how much content matters, how you should build a quality site with good content, how it’s all that matters, and then a site in Latin hits the number two spot…

Pretty entertaining story. Check out both URLs above for the full take.

Search Clicks > Search Volume

https://www.siegemedia.com/seo/search-clicks

Siege Media, content SEO geniuses, have put together a video on why you shouldn’t just focus on searches per month, but rather, focus on search clicks (a metric that’s easy to see using Ahrefs).

Really good stuff.

Is Your Site an Endless Horror for Googlebot?

https://www.contentkingapp.com/academy/crawler-traps/

Search engine spiders don’t like a site that plays hard to get. If they have to put too much effort into crawling your site they just… won’t crawl all of it.

I think we’re all on the same page here in wanting the best experience possible for users AND Google on your site, so it’s worth making sure there aren’t any tricky spots where the crawler could get stuck and give up.

So, how to prevent this?

Definitely dig into the article, but here are the likely culprits:

- URLs with query parameters: these often lead to infinite unique URLs.

- Infinite redirect loops: URLs that keep redirecting and never stop.

- Links to internal searches: links to internal search-result pages to serve content.

- Dynamically generated content: where the URL is used to insert dynamic content.

- Infinite calendar pages: where there’s a calendar present that has links to previous and upcoming months.

- Faulty links: links that point to faulty URLs, generating even more faulty URLs.

If your site might have any of the above situations, it’s probably worth digging in to make sure you’re not killing your crawl budget (the linked article has fixes for everything on the list above).
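If you want a quick first pass before reading the full article, here is a minimal Python sketch that flags URLs showing a few of the patterns listed above. Everything in it is an assumption for illustration: the function name `crawler_trap_signals`, the thresholds, and the list of search parameter names are hypothetical and not taken from the ContentKing article. Treat the output as a list of URLs worth a manual look, not a verdict.

```python
import re
from urllib.parse import urlsplit, parse_qsl

# Hypothetical heuristics; tune them to your own site.
SEARCH_PARAMS = {"q", "s", "search", "query"}
# Matches paths like /2031/04/ (also matches ordinary dated blog URLs).
CALENDAR_RE = re.compile(r"/(19|20)\d{2}/(0?[1-9]|1[0-2])(/|$)")


def crawler_trap_signals(url, max_params=3, max_depth=8):
    """Return a list of reasons a URL might be part of a crawler trap."""
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    params = parse_qsl(parts.query, keep_blank_values=True)
    signals = []

    # Lots of query parameters can multiply into endless unique URLs.
    if len(params) > max_params:
        signals.append("many query parameters")

    # Links into internal search-result pages.
    has_search_param = any(key.lower() in SEARCH_PARAMS for key, _ in params)
    if "search" in (s.lower() for s in segments) or has_search_param:
        signals.append("internal search page")

    # Calendar pages that link to previous and upcoming months forever.
    if CALENDAR_RE.search(parts.path):
        signals.append("calendar-style date path")

    # Faulty relative links often produce /blog/blog/blog/ style paths.
    if len(segments) != len(set(segments)):
        signals.append("repeated path segments")

    if len(segments) > max_depth:
        signals.append("very deep path")

    return signals


if __name__ == "__main__":
    for example in (
        "https://example.com/shop?color=red&size=m&sort=price&page=2",
        "https://example.com/events/2031/04/",
        "https://example.com/blog/blog/blog/post",
    ):
        print(example, crawler_trap_signals(example))
```

Run it over the URL column of a crawl export and sort by the number of signals. Redirect loops are not covered here, since spotting those means actually requesting the URLs.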

Backlinko Explains Search Intent

https://backlinko.com/skyscraper-technique-2-0

Brian Dean has created a pretty good post explaining the importance of understanding query intent (which we covered on our “Medic” core algorithm update post). Calling it Skyscraper 2.0 seems like it needlessly confuses the topic, but whatever, hashtag marketing.

On paper my content had everything going for it:

- 200+ backlinks.

- Lots of comments (which Google likes).

- And social shares out the wazoo.

What was missing? User Intent.

User Intent + SEO = Higher Rankings

Remember:

Google’s #1 goal is to make users happy. Which means they need to give people results that match their User Intent.

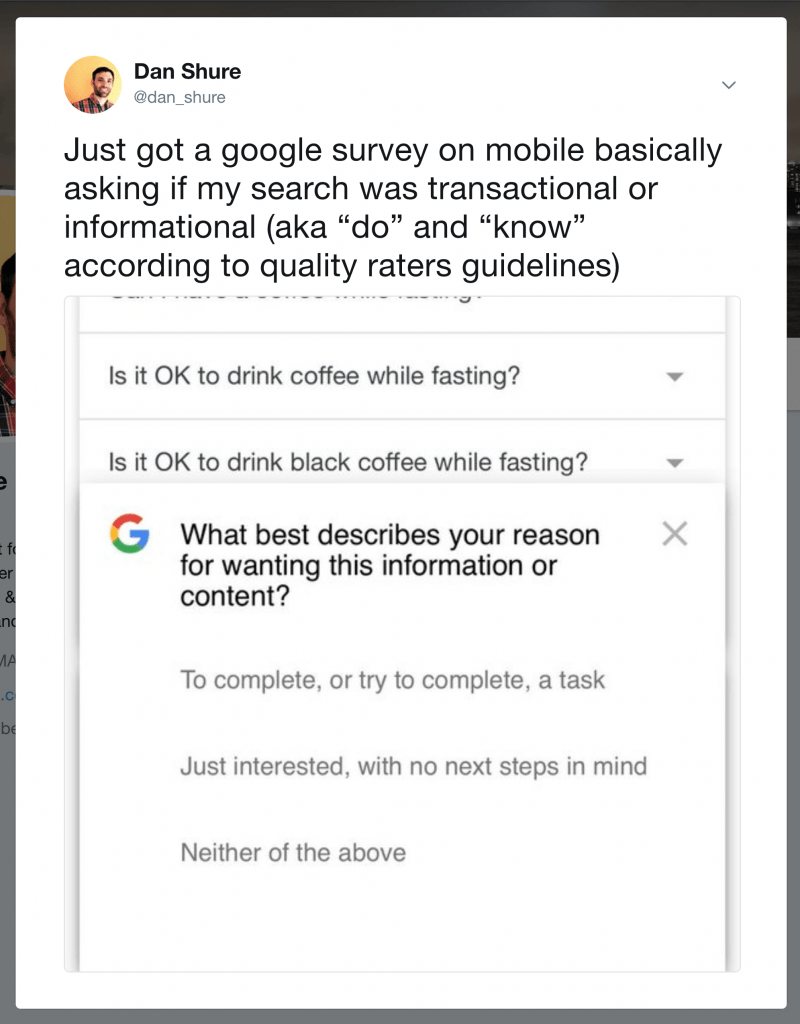

This post also covered an interesting thing: Google has started to solicit user feedback on their intent:

The bottom line is this:

Understanding query intent of a searcher is important, but understanding what Google thinks is the query intent of a searcher will get your content ranking in the top spots.

Another Featured Snippet Post

https://ahrefs.com/blog/find-featured-snippets/

Ahrefs.com has some pretty compelling data about featured snippets.

TL;DR — you’re taking a 31% traffic hit by having the #1 spot but also NOT the featured snippet.

Ranking in 2nd-10th?

So this post is very Ahrefs-centric, in that it shows you how to find featured snippet opportunities using their tool, but still a valuable post.

Check it out if you’re ready to get your featured snippet game on. This post is less about telling you HOW to optimize your content for featured snippet domination (just Google it, there’s about 10,000 posts), and more for how to find the opportunities to go after.

…it’s then a case of trying to understand WHY you don’t own these snippets and doing everything in your power to rectify the issue(s).

Here are a few issues and solutions that, while not guaranteed to help, have apparently “worked” for others:

- Your competitor’s answer is “better” than yours

- You have structured markup issues

- Your content doesn’t adhere to the format searchers want to see

A Cryptomining Script Study by Ahrefs

https://ahrefs.com/blog/cryptomining-study/

Ahrefs did an interesting study recently about the number of sites using a script to mine cryptocurrency by borrowing (without your consent–“borrowing” used pretty loosely here) some of your computer’s processing power while you’ve got the site open in your browser.

So how many sites did Ahrefs find using a cryptomining script?

We found 23,872 unique domains running cryptocurrency mining scripts.

As a percentage of the total 175M+ in Ahrefs’ database, that’s 0.0136%. (Or 1 in 7,353 websites.)
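For anyone who wants to sanity-check those numbers, here is the arithmetic as a small Python sketch. The only inputs are the figures quoted above. The exact database size isn’t given (just “175M+”), so the 175,000,000 used below is an assumption, and the last line works backwards from the quoted “1 in 7,353” to show what total that ratio would imply.

```python
# Figures quoted from the Ahrefs study.
mining_domains = 23_872
quoted_one_in = 7_353

# Assumption: the database is exactly 175M; the study only says "175M+".
assumed_total = 175_000_000

# Share of domains running a cryptomining script, as a percentage.
share_pct = mining_domains / assumed_total * 100

# "1 in N" ratio under the same assumption.
one_in = assumed_total / mining_domains

# Total database size that the quoted "1 in 7,353" would imply.
implied_total = mining_domains * quoted_one_in

print(f"{share_pct:.4f}% of domains")
print(f"about 1 in {one_in:,.0f} at exactly 175M domains")
print(f"quoted ratio implies roughly {implied_total:,} domains")
```

At exactly 175M domains the ratio comes out slightly lower than the quoted 7,353, which fits a database a little larger than 175M.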

The study found some interesting things, such as:

- some of the top sites (as measured by SEO authority) that use cryptomining scripts allow user-generated content, such as Medium.com

If you’re interested in alternative monetization methods, cryptocurrency, or studies that use a huge chunk of data, check out the full post.

If you have a site that relies on organic traffic and you’re thinking about using such a script on your site, here’s probably the most important part of the article (even though it’s speculation):

There has even been rumours in the past that Google might block websites with crypto-mining scripts in Chrome (a browser with ~58% market share). Bottomline: installing crypto-mining scripts simply isn’t worth the risk for high-profile websites.

So, tread carefully there.

Real Talk: Keyword Search Volume

https://ahrefs.com/blog/keyword-traffic-estimation/

Nice article from Ahrefs on what searches per month actually means with keyword research.

Obviously no one gets close to the amount of data Google has with regards to keyword traffic, but as these things go, Ahrefs has access to more info than most of us ever will. So when they put out an article on something like the realities of search volume, it’s a good idea to listen up.

I’m sure every professional SEO has noticed that ranking a page for a high-volume keyword doesn’t always result in a huge amount of traffic. And the opposite is true, too—pages that rank for seemingly “unpopular” keywords can often exceed our traffic expectations.

This is something that not everyone realizes, so I wanted to highlight it here as something to keep in mind/something one should be reminded of often.

Just because you rank for a keyword doesn’t mean you will get some high percentage of click throughs/traffic from ranking for that term. There are a number of reasons they identified that could be the cause, from obvious ones like ads taking away clicks from the top spot, to less obvious ones like long tail keyword variations that have never been searched before.

It’s an interesting article, and I definitely recommend taking a look.

The Rise and Fall of Featured Snippets

https://moz.com/blog/knowledge-graph-eats-featured-snippets

Featured Snippets: 0

Knowledge Graph: 1

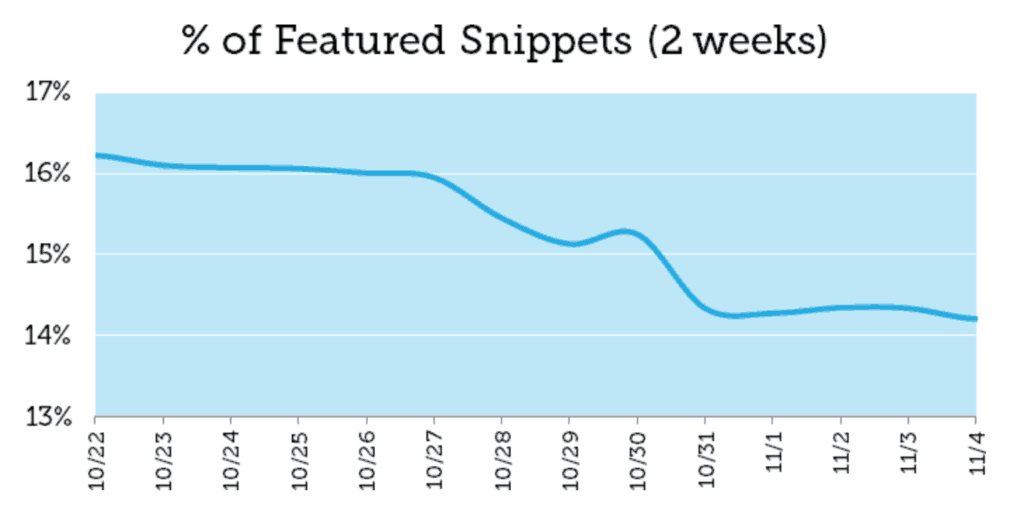

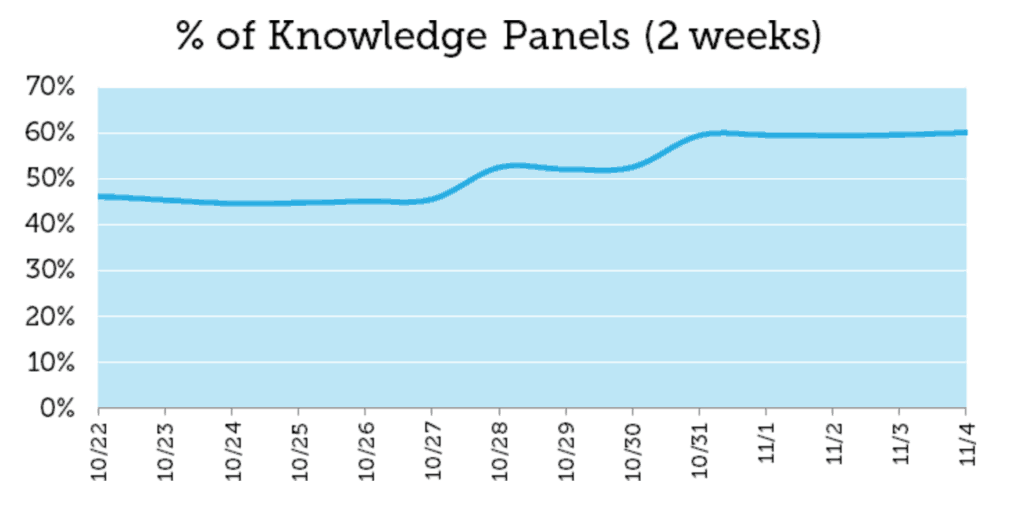

The two weeks encompassing the end of October/beginning of November saw a pretty significant SERP switch-up.

The number of featured snippets for keywords fell:

And the number of knowledge graph results rose:

We’ve highlighted several articles in the past on how to win the featured snippet for your keywords. Many agencies and businesses have put a lot of energy and resources into winning the featured snippet (the REAL #1 ranking). So, you might be asking… WTF, Google?

It’s likely that Google is trying to standardize answers for common terms, and perhaps they were seeing quality or consistency issues in Featured Snippets. In some cases, like “HDMI cables”, Featured Snippets were often coming from top e-commerce sites, which are trying to sell products. These aren’t always a good fit for unbiased definitions. Its also likely that Google would like to beef up the Knowledge Graph and rely less, where possible, on outside sites for answers.

The real winner here is Wikipedia, as they are generally the source of the data for the knowledge panels (and “winner” is used loosely here, as they are not really compensated for being the engine behind knowledge panels. Maybe Sundar Pichai will donate $3 to Wikipedia during this funding drive).

When You Accidentally Block Googlebot

http://www.localseoguide.com/googlebot-may-hitting-bot-blocker-urls/

There are many legitimate reasons you would have some bot-blocking language in your site’s code. The use-case in the article is to prevent your site being scraped. That’s legit.

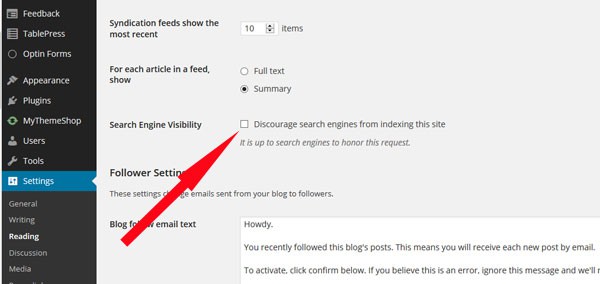

However, I’ve also done the thing where I unchecked and forgot to re-check the “allow search engines to index this site” box and it took me way too long to realize…

This article highlights an instance of Googlebot being unintentionally blocked. I definitely recommend the read:

Many sites use bot blockers like Distil Networks to stop scrapers from stealing their data. The challenge for SEOs is that sometimes these bot blockers are not set correctly and can prevent good bots like Googlebot and BingBot from getting to the content, which can cause serious SEO issues. Distil is pretty adamant that their service is SEO-safe, but I am not so certain about others. We recently saw a case where the http version of a big site’s homepage was sending Googlebot to a 404 URL while sending users to the https homepage, all because the bot blocker (not Distil) was not tuned correctly.

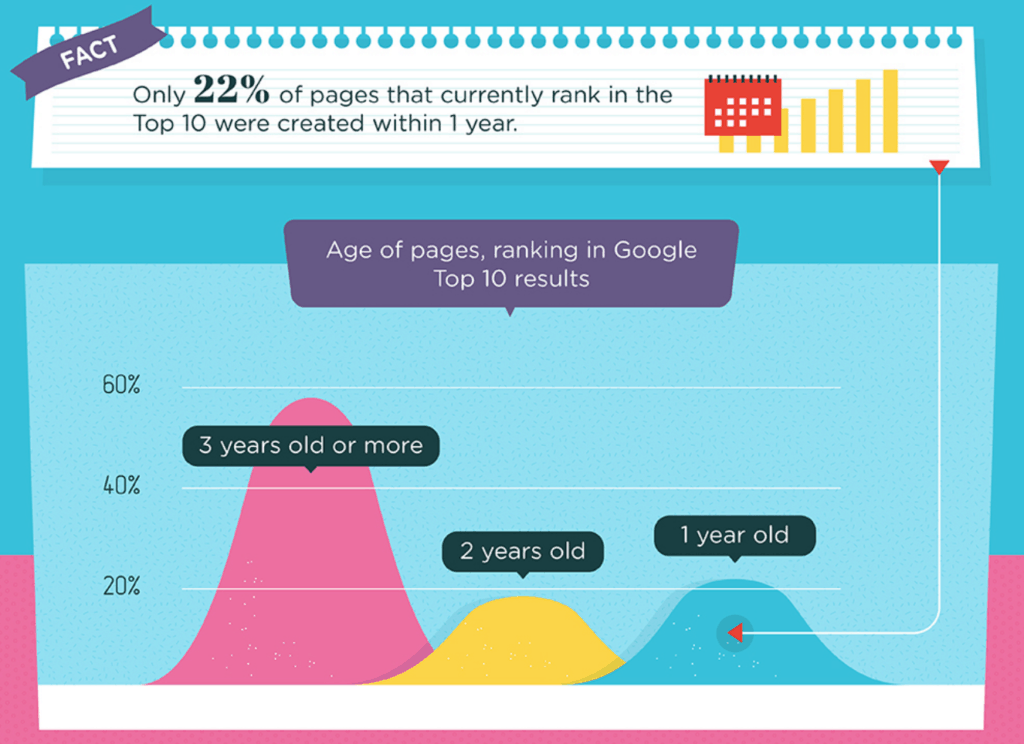
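If you run (or tune) a bot blocker yourself, the check that matters is telling the real Googlebot apart from scrapers that fake its user agent. Google’s documented approach is a reverse DNS lookup on the requesting IP, a check that the hostname belongs to googlebot.com or google.com, and then a forward lookup to confirm the hostname resolves back to the same IP. Below is a minimal Python sketch of that idea. The function name `is_verified_googlebot` and the injectable lookup parameters are my own assumptions for illustration (they make the logic testable without touching the network), and this is not how Distil or any other vendor implements it.

```python
import socket

# Domains Google documents for its main crawlers' reverse DNS names.
GOOGLE_CRAWLER_SUFFIXES = (".googlebot.com", ".google.com")


def is_verified_googlebot(ip, reverse_lookup=None, forward_lookup=None):
    """Reverse-then-forward DNS check for an IP claiming to be Googlebot.

    reverse_lookup and forward_lookup are hypothetical hooks so the
    logic can be exercised with fake resolvers; by default they use
    the standard library's socket functions.
    """
    if reverse_lookup is None:
        reverse_lookup = lambda addr: socket.gethostbyaddr(addr)[0]
    if forward_lookup is None:
        forward_lookup = socket.gethostbyname

    # Step 1: reverse DNS, IP -> hostname.
    try:
        host = reverse_lookup(ip)
    except OSError:
        return False

    # Step 2: the hostname must sit under a Google crawler domain.
    if not host.lower().rstrip(".").endswith(GOOGLE_CRAWLER_SUFFIXES):
        return False

    # Step 3: forward DNS, hostname -> IP, must match the original IP.
    try:
        return forward_lookup(host) == ip
    except OSError:
        return False


if __name__ == "__main__":
    # Fake resolvers for a quick offline demonstration.
    fake_reverse = lambda ip: "crawl-66-249-66-1.googlebot.com"
    fake_forward = lambda host: "66.249.66.1"
    print(is_verified_googlebot("66.249.66.1", fake_reverse, fake_forward))
```

A user agent string alone proves nothing, which is why a blocker that keys only on user agents or request rates can end up treating the real Googlebot like a scraper.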

Time to Ranking

http://www.serplogic.com/infographics/rank-google

Hey, shout out to 2015, this post presents its information via infographic.

Why?

Links, probably.

Anyway, since it’s hard to quote from an infographic, I took a screen shot of the relevant section.

As you can probably imagine, one of the top 3 questions we get asked by people interested in signing up with us for our amazing link building abilities is how long it will be until they start ranking on page one.

The answer to this depends on, like, 1,000 different things (age of the page you’re trying to rank, keyword competitiveness, etc.), but it helps to manage expectations by looking at some data:

TL;DR: Older pages dominate the front page of the SERPs for many keywords. Google has about zero incentive to let newer pages (unproven in trust and authority) slip into the top spot with some clever SEO tricks unless it’s a niche that thrives on time-sensitive content. Unfortunately. 🙂

SEO is Not Magic

https://webmarketingschool.com/secret-seo-guaranteed-results/

But like fixing a broken engine or planting a vineyard, there’s a very big difference between being able to do it well, and just bullshitting everyone (and yourself) about how easy it is/how good you are.

We’ve always been pretty clear that SEO can be distilled down to a few things, and this post does a good job of summarizing that:

On Site / Technical SEO boils down to making your website easy for Google to crawl, and understand.

Content that appears on your website must be worthwhile, relevant, interesting and adds value to Google’s index.

Authority, which you can call PageRank, backlinks, link equity or any number of other terms, is the primary way Google sorts their results.

While each of those three things may have hundreds of factors to consider, that’s it:

There is no fourth category only revealed to the illuminati.

There is no secret handshake with Google engineers.

There is no “Secret Sauce.”

Of course, there’s a lot under the heading of, say, “authority” that isn’t covered.

Are you still building links in forum posts? Or do you have a sophisticated set-up of sites to test SEO theories, or a network of editors and sites where you can publish good content and get good links?

Not to push the hard sell… let’s call it the medium sell? We’re good at what we do here, and while SEO is not magic, neither is a tonsillectomy, but that doesn’t mean you should do it yourself!

Click here to read about how we can help.

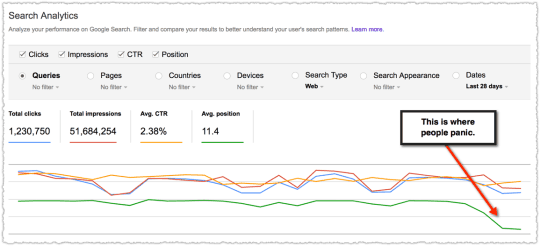

Why Your GSC Position Data (Probably) Dropped

http://www.blindfiveyearold.com/analyzing-position-in-google-search-console

This is a post about using Search Console to analyze your position data–some really heavy stuff. Mostly I stick to page optimizations and link building strategies. I don’t dig too deeply into Search Console, but this issue seems like a frustrating one, so hopefully this helps.

Have you ever seen something like this in Search Console:

And reacted like:

There are always lots of reports about whether or not there’s a bug in Google Search Console. Is it a bug or an algorithm update? This post helps show that understanding the position data, and really knowing how to read it, can help easily distinguish between the two.

There’s a simple explanation of what’s going on, and your site (probably) hasn’t tanked:

Google Search Console position data is only stable when looking at a single query. The position data for a site or page will be accurate but is aggregated by all queries.

In general, be on the look out for query expansion where a site or page receives additional impressions on new terms where they don’t rank well. When the red line goes up and the green goes down that could be a good thing.

And one more from the comments on this post:

And that’s why I think understanding how to read this data is so important. If you look at the data and say “it’s a bug” instead of looking at it and saying “whoa, something big just happened” then you’re bound to fall behind.
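To make the query expansion point concrete, here is a tiny Python sketch with made-up numbers. It assumes the site-level position is an impression-weighted average across queries, which is my simplified reading of how the aggregation behaves, not a statement of Search Console’s exact formula. The function name `average_position` and all figures are hypothetical.

```python
def average_position(rows):
    """Impression-weighted average position over (position, impressions) rows."""
    total_impressions = sum(impressions for _, impressions in rows)
    weighted = sum(position * impressions for position, impressions in rows)
    return weighted / total_impressions


# Before: the page shows up for one query, ranking 2nd.
before = [(2.0, 1000)]

# After: same ranking for that query, plus impressions on a new query
# where the page sits way down in position 40.
after = [(2.0, 1000), (40.0, 1000)]

print(average_position(before))
print(average_position(after))
```

The original query never moved, impressions doubled, and yet the aggregate position number got much worse. That is the pattern where checking position per query tells you what actually happened.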

Why Link Spam is on the Rise Again: Because It Works

http://www.blindfiveyearold.com/ignoring-link-spam-isnt-working

You guys/gals, this is a good post.

Before Panda and Penguin, you could throw crappy links and thin content at your site, and it might rank fine. But if it didn’t, you just got ignored–not penalized.

Post Panda/Penguin, the risk of this tactic carried a huge cost for an established business.

Current incarnations of various algorithms are penalizing less and ignoring more.

Here’s a TL;DR for you (but you should still go read it. It’s not THAT long):

So when Google says they’re pretty good at ignoring link spam that means some of the link spam is working. They’re not catching 100%. Not by a long shot.